The Rising Demand for Data: Oxylabs’ 2020 Trend Report

Adomas Sulcas

Last updated on 2020-02-13

Oxylabs is proud to present its first annual trend report. The 20-page document, compiled by Oxylabs’ Data Analysis Department, analyzes web scraping behavior throughout 2019 and, for the first time, offers an in-depth, behind-the-scenes look at how market players from a variety of industries carry out their data gathering operations.

The findings of the report are based on aggregated internal data on the scraping behavior of more than 500 clients, detailing trends in the use of our datacenter proxies, residential proxies, and Real-Time Crawler (now known as Web Scraper API). The report also forecasts which web data gathering trends can be expected during 2020.

Gain a broader understanding of how some of the most prominent companies in the world engage in web scraping by downloading the full report for free.


Among its key findings, Oxylabs’ 2020 Trend Report reveals that total request volume grew substantially during 2019 compared to the prior year.

Datacenter proxy requests grew by 23%, while residential proxy requests grew even more sharply, by 165%. It is safe to assume that this increase at least partially reflects the growing global demand for data.

In this article, we present the first part of the report. In the upcoming weeks, the following parts will also be published one by one:

Datacenter Proxies Prove to Be Sufficient for the Majority of Web Scraping Tasks

Global Companies Indicate Increasing Demand for Residential Proxies

The Rising Need for Scraping Tools: Real-Time Crawler (now known as a Web Scraper API)

Web Scraping Trends Using Datacenter Proxies in E-commerce Market: 2017-2019 Case Studies

Emerging Markets of Asia and Northern America: Web Scraping Activity Rises

From all of us at Oxylabs, we hope you will find the knowledge presented in the report useful.

Data market overview

Unlike just ten years ago, the term “big data” is now familiar to laymen and specialists alike. Back in 2015, Gartner, a global research and advisory firm, dropped “big data” from its popular Hype Cycle methodology, claiming that it was no longer a hyped technology with untested value and had instead become the new normal.

Interestingly enough, Google Trends’ U.S. 2010-2020 search data corroborates Gartner’s position by showing that the term received peak interest in October 2014 and then steadily declined in popularity in the following years.

Popularity of the term “big data” in the U.S. Source: Google Trends, 2010-2020 data.

No longer as new and exciting, big data has matured and branched into other areas, such as data science and business intelligence, becoming irreplaceable and delivering tangible value for businesses in all types of industries worldwide.

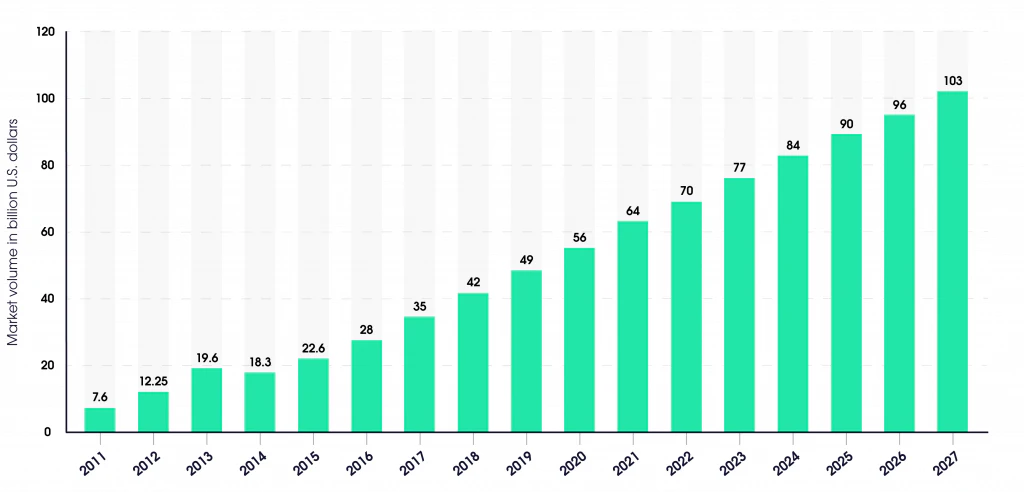

Proving the point, the big data analytics industry continues to grow steadily. In 2019, it reached a value of $49 billion. Assuming a steady growth rate, it should more than double by 2027, reaching an impressive $103 billion.

Forecast of Big Data market size, based on revenue, 2011-2027. Source: Statista (2018)
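As a rough check on the market figures above, the implied yearly growth rate can be worked out with a compound annual growth rate calculation. The sketch below is illustrative only: the function name `implied_cagr` is hypothetical, and treating 2019 to 2027 as eight annual compounding periods is an assumption rather than something the cited forecast states.

```python
def implied_cagr(start_value, end_value, periods):
    """Return the compound annual growth rate as a fraction (0.10 means 10%)."""
    if start_value <= 0 or periods <= 0:
        raise ValueError("start_value and periods must be positive")
    return (end_value / start_value) ** (1 / periods) - 1


START_BILLION = 49.0    # 2019 market value quoted in the text
END_BILLION = 103.0     # 2027 forecast quoted in the text
PERIODS = 2027 - 2019   # assumption: eight annual compounding periods

rate = implied_cagr(START_BILLION, END_BILLION, PERIODS)
multiple = END_BILLION / START_BILLION

print(f"Growth multiple: {multiple:.2f}x")
print(f"Implied annual growth: {rate:.1%}")
```

Under these assumptions the market grows a little over twofold, which works out to slightly under 10% per year. Counting fewer periods raises the implied yearly rate accordingly.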

The demand for data and data analysis for business intelligence is well illustrated by the fact that data scientist is currently one of the most in-demand specialist positions around the world, and this demand is growing faster than ever before.

For example, LinkedIn’s 2020 U.S. job trends report features the increasing demand for artificial intelligence and data science positions as the main 2020 job trend, claiming that these roles “continue to proliferate across nearly every industry.”

According to an industry report by Fortune Business Insights, some of the key market drivers accounting for this growth are AI solution implementation, customer analytics, and operational analytics, the last of which Techopedia defines as “a type of business analytics which focuses on improving existing operations.”

Naturally, with the increasing demand for data by businesses worldwide, web scraping, the primary method of collecting public data from online sources, is also experiencing steady growth in popularity.

For online-based businesses in particular, such as e-commerce sites or travel fare aggregators, web scraping is an integral part of operations. Moreover, it is now established as a standard practice in multiple use cases across various industries, for example, as a method to ensure that product prices are in line with the market average and to determine which products have the highest demand. Forward-looking companies also leverage scraped data to achieve higher SERP rankings by optimizing their SEO efforts or to check whether their ads are displayed correctly in multiple locations, among many other uses.

About the author

Adomas Sulcas

Former PR Team Lead

Adomas Sulcas was a PR Team Lead at Oxylabs. Having grown up in a tech-minded household, he quickly developed an interest in everything IT and Internet related. When he is not nerding out online or immersed in reading, you will find him on an adventure or coming up with wicked business ideas.

All information on Oxylabs Blog is provided on an "as is" basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Oxylabs Blog or any third-party websites that may be linked therein. Before engaging in scraping activities of any kind you should consult your legal advisors and carefully read the particular website's terms of service or receive a scraping license.
