Data extraction is at the core of many different businesses: from finance to e-commerce companies, and everything in between. Data extraction tools can help automate various tasks and save companies money, time, and human resources. In this article, we will discuss the importance of data extraction, have a look at the use cases, review different tools, and go through the challenges of this process.

If you are wondering whether your company should extract data, here you will find all the necessary information.

What is data extraction?

Data extraction is the process of collecting data from one or more sources so that it can be processed or stored. It is the first step of the Extract, Transform and Load (ETL) process, which is the core of data ingestion. Data ingestion is an important part of business strategy, since it makes collected information available for immediate use or imports it for storage in a database.

What is data extraction used for?

As the data extraction definition suggests, this process is used to consolidate and refine information so that it can be stored in a centralized location and later transformed into the required format.

Many companies extract data and use it in their business. In this article, we will cover various use cases in more detail, but first, let’s find out more about data structures and extraction methods. Please note that in this article we only talk about public data extraction. Data that is not public can only be scraped with the clear consent of its owner, or if the data belongs to you.

Types of data structures and extraction methods

Unstructured data refers to information that lacks a basic structure. Such data has to be reviewed or formatted before it can be extracted. This may include clean-up steps such as deleting duplicate results and removing unnecessary symbols and whitespace.
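The clean-up steps mentioned above can be sketched in a few lines of Python. This is only an illustration: the function name `clean_records`, the sample strings, and the choice of which symbols count as "unnecessary" are all assumptions, and a real project would adapt them to its own data.

```python
import re

def clean_records(raw_records):
    """Strip unwanted symbols, normalize whitespace, and drop duplicates (order preserved)."""
    seen = set()
    cleaned = []
    for record in raw_records:
        # Assumption: keep only word characters, whitespace, and a few price-related symbols
        text = re.sub(r"[^\w\s.,$%-]", "", record)
        # Collapse runs of whitespace into single spaces and trim both ends
        text = re.sub(r"\s+", " ", text).strip()
        key = text.lower()  # compare case-insensitively when looking for duplicates
        if text and key not in seen:
            seen.add(key)
            cleaned.append(text)
    return cleaned

# Hypothetical raw results, as they might come back from a scraped page
raw = ["  Laptop   $999 ", "Laptop $999", "Phone ***  $499\n", ""]
print(clean_records(raw))  # ['Laptop $999', 'Phone $499']
```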

Structured data is usually already formatted for use and does not have to be additionally manipulated.

Full extraction retrieves all the data from the source and is typically used when acquiring information for the first time. Some sources cannot identify which records have changed, so the entire dataset has to be reloaded in order to receive up-to-date information.

Incremental extraction involves tracking information changes and does not require extracting all the data from the target each time there is a change. However, this method may not detect deleted records.
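To make the difference between the two methods concrete, here is a minimal sketch. It assumes a hypothetical source in which every row carries an `updated_at` timestamp; the names `SOURCE`, `full_extract`, and `incremental_extract` are made up for illustration, and tracking changes by timestamp is just one possible approach.

```python
from datetime import datetime

# Hypothetical source table: each row has an id, a value, and a last-modified timestamp
SOURCE = [
    {"id": 1, "value": "a", "updated_at": datetime(2024, 1, 1)},
    {"id": 2, "value": "b", "updated_at": datetime(2024, 1, 5)},
    {"id": 3, "value": "c", "updated_at": datetime(2024, 1, 9)},
]

def full_extract(source):
    """Copy every row, regardless of when it last changed."""
    return [dict(row) for row in source]

def incremental_extract(source, last_run):
    """Copy only the rows modified after the previous extraction run."""
    return [dict(row) for row in source if row["updated_at"] > last_run]

snapshot = full_extract(SOURCE)
changed = incremental_extract(SOURCE, datetime(2024, 1, 4))
print(len(snapshot), [row["id"] for row in changed])  # 3 [2, 3]

# A row deleted at the source simply stops appearing, so an incremental run
# returns nothing for it and the deletion goes unnoticed.
after_delete = [row for row in SOURCE if row["id"] != 1]
print(incremental_extract(after_delete, datetime(2024, 1, 10)))  # []
```

The last two lines show why deleted records are a blind spot: the incremental run has no changed row to report, so a copy made earlier would still contain the removed record.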

Data extraction process

So how is data extraction done? In most cases, the data extraction process from a database or a software-as-a-service (SaaS) platform involves three main steps:

Looking for structure changes, i.e. new tables, fields, or columns.

Retrieving target tables (or fields, or columns) from the records specified by the integration’s replication scheme.

Extracting appropriate data, if it is found.

Collected information is then uploaded to a data warehouse.
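The three steps above can be sketched against a small in-memory SQLite database using Python's standard `sqlite3` module. This is a rough illustration rather than how any particular integration works: the table names, the `known_schema` dictionary standing in for a replication scheme, and the helper functions are all assumptions, and the upload to a data warehouse is left out.

```python
import sqlite3

def get_schema(conn):
    """Map each table name to the list of its column names."""
    tables = conn.execute(
        "SELECT name FROM sqlite_master WHERE type='table' ORDER BY name"
    ).fetchall()
    schema = {}
    for (table,) in tables:
        columns = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        schema[table] = [column[1] for column in columns]  # index 1 holds the column name
    return schema

def extract(conn, known_schema, targets):
    # Step 1: look for structure changes (here, only tables we have not seen before)
    current = get_schema(conn)
    new_tables = sorted(set(current) - set(known_schema))
    # Step 2: retrieve the target tables that actually exist in the source
    found = [table for table in targets if table in current]
    # Step 3: extract the data from each table that was found
    data = {table: conn.execute(f'SELECT * FROM "{table}"').fetchall() for table in found}
    return new_tables, data

# Hypothetical source database
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE products (id INTEGER, name TEXT)")
conn.execute("CREATE TABLE prices (product_id INTEGER, price REAL)")
conn.execute("INSERT INTO products VALUES (1, 'Laptop')")

new_tables, data = extract(conn, {"products": ["id", "name"]}, ["products", "orders"])
print(new_tables)  # ['prices']
print(data)        # {'products': [(1, 'Laptop')]}
```

Here `orders` is requested but does not exist in the source, so nothing is extracted for it, which mirrors the "if it is found" condition in the third step.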

If you would like to learn more about the data extraction process, read our article on how to extract data from any website, where we cover every step in detail.

Why is data extraction important?

According to McKinsey Digital’s report, CEOs spend more than 20% of their time on tasks that could be automated, for example, collecting information for status reports and analyzing operational data. Data extraction can help systematize information and automate time-consuming tasks, and it brings companies other benefits as well, for example:

Improve accuracy and reduce human error

Data extraction tools automate repetitive data entry processes. Automation can improve accuracy of data inputs and reduce human errors.

Increase productivity

Manually entering large amounts of data is a daunting task for many, since it is repetitive and hardly stimulating. Removing the task of manually entering data means employees can spend more time on other duties that they find more motivating.

Increase data availability

Extracted and stored data becomes visible and available much faster to anyone on your team who needs it. Information can be accessed whenever needed, without having to wait for someone to upload it into the system manually.

Save businesses time and money

All of the above points add up to one of the most important benefits of data extraction: it helps companies save time and money. Automated processes require fewer human resources and allow the staff to focus on data analysis and other tasks.

Use cases: which companies use data extraction?

Various companies extract data to simplify and automate tasks. Other businesses collect information from different sources in order to make it available for others. Here are some examples of businesses that perform data extraction operations at scale:

E-commerce companies. To stay competitive, e-commerce businesses extract product and pricing information from various sites. Sometimes, this information is used to implement dynamic pricing strategies in order to increase revenue.

Financial firms use data extraction to generate financial reports and statements. Gathering financial data is often challenging since it may be documented in diverse file formats, but it is extremely important for building predictive analysis.

Companies that provide public statistical information also use data extraction. One example is government organizations that collect and store statistical data.

Data science-focused companies collect large amounts of information to train machine learning models. These models use the data to study patterns and build their own logic.

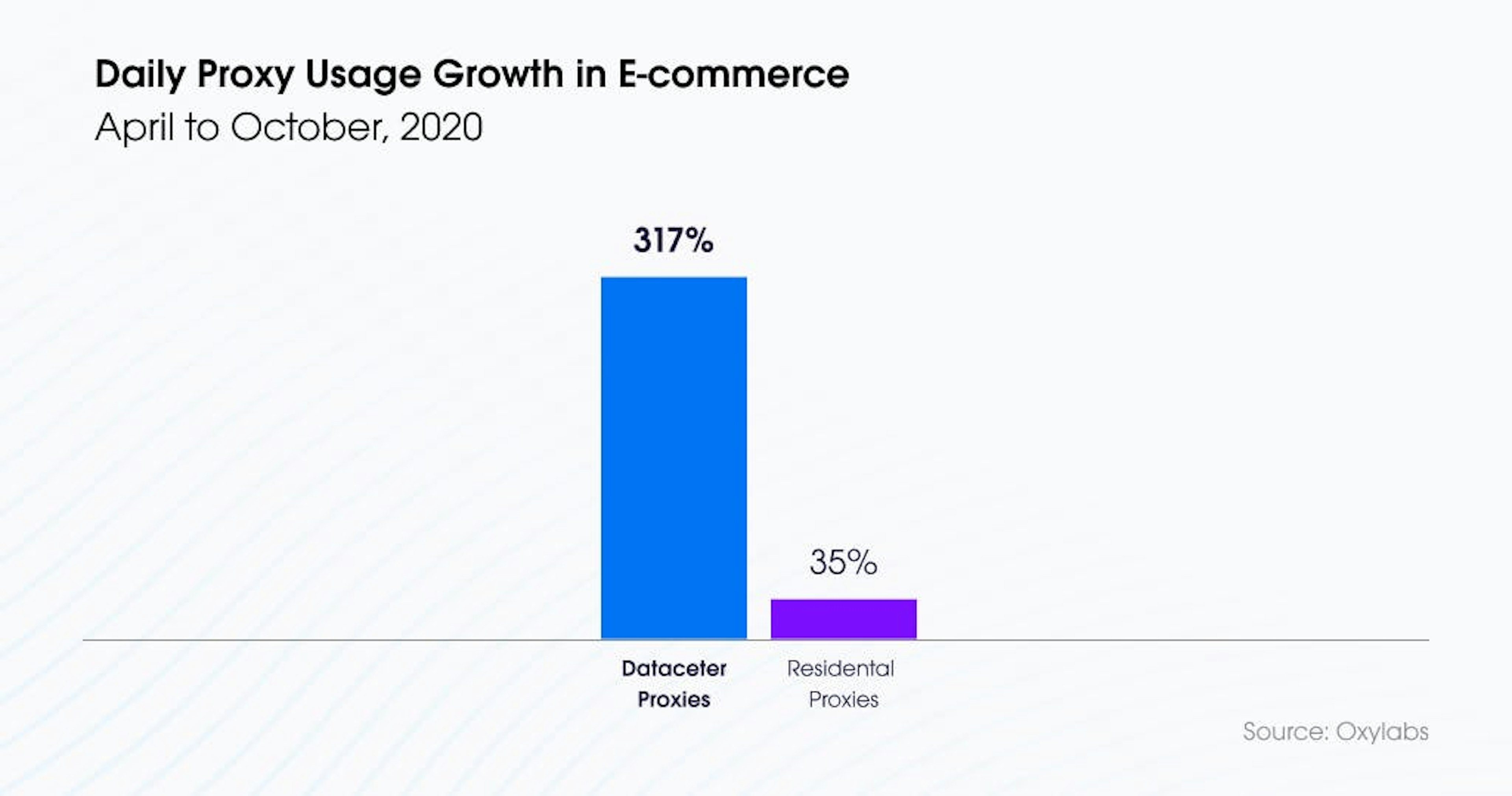

E-commerce companies use proxies for data extraction

Data extraction tools

There are three main types of data extraction tools. Each type of tool benefits companies in different ways.

Batch data extraction tools

Batch extraction runs on a time interval and can run as frequently as required. Batch extraction tools consolidate data in clusters, and usually do it in off-peak hours, in order to minimize the disturbance.

Open source tools

Open source tools require supporting infrastructure and in-house knowledge, but they can be a good budget-friendly solution.

Cloud-based tools

Cloud-based tools are a good solution for companies that wish to cover all ETL processes in one place. Cloud platforms often offer data storage, analysis, and extraction. Cloud-based tools do not require an in-house team of experts, so they can be a good option for smaller companies.

Data extraction challenges

Extracting data from complex pages

Many web scraping tools cannot extract information from complex websites. If you are looking to gather public information from complicated pages, you need a powerful web scraper that can return results without page blocks and breakdowns. An example of such a tool is our Web Scraper API. It helps extract public data and returns easy-to-read, already parsed results. This tool is a great solution for companies of all sizes.

Joining data from different sources

When gathering large amounts of data, it is inevitable that information comes from different sources. Joining this data together is a challenge, especially if parts of it are structured and others are unstructured. Solving this challenge requires a lot of planning.
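As a small illustration of what joining involves, the sketch below merges records from two hypothetical sources in different formats, a CSV export and a JSON feed, on a shared key. The field names (`sku`, `price`, `title`) and the function `join_on_key` are assumptions made for this example; real sources rarely line up this neatly, which is where the planning comes in.

```python
import csv
import io
import json

# Two hypothetical sources describing the same products in different formats
csv_source = "sku,price\nA1,999\nB2,499\n"
json_source = '[{"sku": "A1", "title": "Laptop"}, {"sku": "C3", "title": "Tablet"}]'

def join_on_key(csv_text, json_text, key):
    """Inner-join CSV rows and JSON records that share the same key value."""
    # Index the CSV rows by key so each JSON record can be matched in one lookup
    csv_rows = {row[key]: row for row in csv.DictReader(io.StringIO(csv_text))}
    merged = []
    for item in json.loads(json_text):
        match = csv_rows.get(item[key])
        if match:  # keep only the records present in both sources
            merged.append({**item, **match})
    return merged

print(join_on_key(csv_source, json_source, "sku"))
# [{'sku': 'A1', 'title': 'Laptop', 'price': '999'}]
```

Note that the price comes back as the string `'999'`, because CSV carries no type information. Differences like this between sources are exactly what has to be reconciled before the joined data is usable.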

Data security

Extracted data may include sensitive information. If the data you wish to extract is regulated, it may have to be removed. Moving data may also require extra security measures. For example, it may have to be encrypted in transit.

Conclusion

This article covers all the basics a CEO or any other person in a company should know about data extraction. We discussed the main points, including what data extraction is, how businesses benefit from gathering public data, and what the main challenges of this process are.

If you are ready to take up data extraction for your business, read an article about crawling a website without getting blocked, AI scraping, or web scraping in R, and prepare to gather loads of useful public information for your company! Or, if you're interested in starting data analysis to get valuable insights right away, check out our datasets solution.

Forget about complex web scraping processes

Choose Oxylabs' advanced web intelligence collection solutions to gather real-time public data hassle-free.

About the author

Adelina Kiskyte

Former Senior Content Manager

Adelina Kiskyte is a former Senior Content Manager at Oxylabs. She constantly follows tech news and loves trying out new apps, even the most useless. When Adelina is not glued to her phone, she also enjoys reading self-motivation books and biographies of tech-inspired innovators. Who knows, maybe one day she will create a life-changing app of her own!

All information on Oxylabs Blog is provided on an "as is" basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Oxylabs Blog or any third-party websites that may be linked therein. Before engaging in scraping activities of any kind you should consult your legal advisors and carefully read the particular website's terms of service or receive a scraping license.
