Buy proxy servers from $1.2/IP

✔No hidden fees ✔Free geo-targeting ✔The largest selection of locations

Residential IPs

175M+ Residential IPs

Fastest proxies in the market

Real profile, human-like Residential IPs

Mobile IP Proxies

3G/4G/5G/LTE Mobile proxies

Automatic mobile IP address rotation

Fewer IP blocks and CAPTCHAs

Datacenter IPs

188 available countries

Free city and state targeting

Pre-selected and tested proxies

ISP Proxies

Tailored for most difficult targets

Unlimited-duration sessions

Premium ASN providers

Dedicated ISP Proxies

Dedicated IPs for complete control

Unlimited session duration and bandwidth

Premium ASN provider selection

Enterprise Dedicated Datacenter Proxies

If you require more than 3000 IPs, contact our Sales team for a custom solution.

Residential Proxies

Human-like web scraping without IP blocking. Choose paid proxy servers to collect public data from almost any website worldwide with pinpoint targeting precision.

Use a pool of 175M+ residential IPs

Collect large amounts of public data

Forget about blocks and CAPTCHAs

Best for: Review monitoring, ad verification, travel fare aggregation, cybersecurity.

Dedicated Datacenter Proxies

The highest-performing proxies on the market. Perform large-scale web scraping at high speed with private proxies.

2M+ dedicated IPs

Unlimited sessions, bandwidth with fair usage, and targets

Exceptional speeds with avg. 99.9% uptime

Best for: Market research, brand protection, email protection, cybersecurity.

Proxy server locations

Benefits of Oxylabs proxy solutions

Reliable infrastructure

We conduct 24/7 system maintenance, ensuring a ∼99.95% success rate.

24/7 support

Get technical help day and night from our dedicated support team.

Large proxy pool

We upkeep one of the largest proxy pools in the market with 177M+ IPs.

Fast response times

The market-leading response times averaging 0.6 seconds.

Effortless integration

Proxy authentication methods and use instructions covered in documentation.

Free geo-targeting

Filter IP addresses by country, city level, state, continent, coordinate, ASN.

Ethically produced

Top-tier quality proxy servers coming from legitimate sources.

Automatic IP rotation

Maintain the same IP or switch with every request, depending on your needs.

Simple integration

Oxylabs proxies integrate seamlessly with third-party applications.

Residential Proxies:

Dedicated Datacenter Proxies:

Take a look at the integration examples to the right.

import requests username = "customer-USER" password = "PASS" proxy = "pr.oxylabs.io:7777" proxies = { 'http': f'http://{username}:{password}@{proxy}', 'https': f'http://{username}:{password}@{proxy}' } response = requests.request( 'GET', 'https://ip.oxylabs.io/location', proxies=proxies, ) print(response.text)

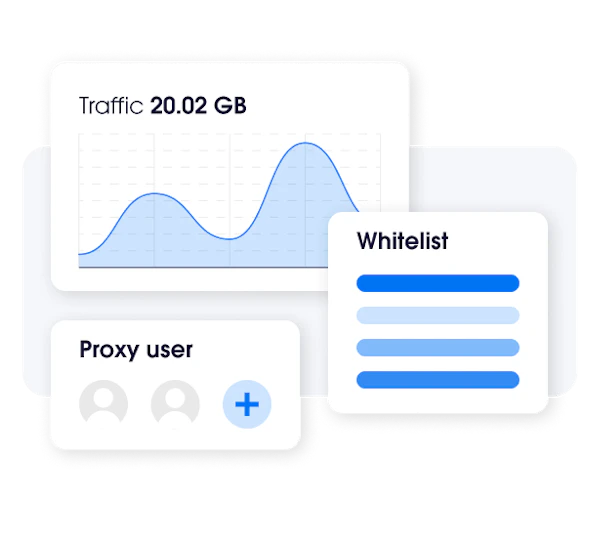

All-in-one dashboard

For ease of use, you can find all the account management options in the Oxylabs dashboard. Here, you can:

View usage statistics

Manage subscriptions

Create proxy users

Invite colleagues for collaboration

Whitelist IPs

Change user plans

Top-up your traffic

Set usage limits

Highly trusted by top enterprises

Discover what our customers are saying about us. Join the ranks of those who have chosen us and trust our commitment to excellence.

Proxy service performance compared

Residential IPs

175M+

150M+

Success rate

99.82%

98.96%

Response rate

0.41 s

1.21 s

Residential IPs

175M+

150M+

Success rate

99.82%

98.96%

Response rate

0.41 s

1.21 s

*independent research by ProxyWay, 2024

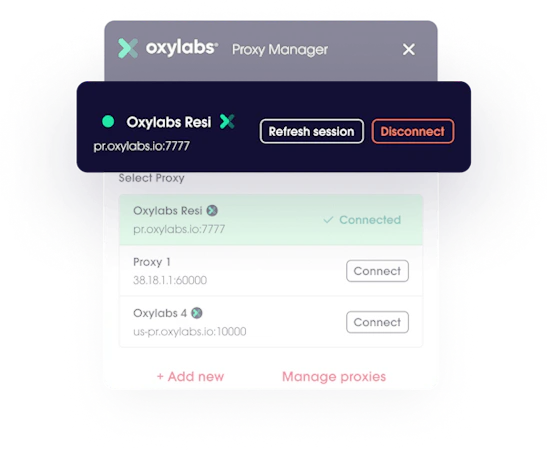

Get Oxy Proxy Extension for free

Oxylabs Proxy Extension is one of the many ways to make use of Oxylabs proxies. With it, you can quickly enable proxies for Google Chrome with a single click. The extension allows you to:

Add and switch between multiple proxies.

Maintain the same IP address for multiple requests.

Switch between proxy types and choose between SOCKS, HTTPS and HTTP proxies.

Add an unlimited number of proxies.

Use multiple proxy server providers.

Frequently asked questions

What is a paid proxy list?

Paid proxy servers offer all the premium proxies functions and supporting features you can expect. They typically provide faster connection speeds and better reliability, including vast global location selection and unlimited bandwidth.

Paid proxy services are not subject to the same usage limitations and bandwidth constraints as free proxy servers.

Can I test proxy servers before buying?

Is a VPN the same as a proxy server?

What are the risks of using free proxies?

Are proxy servers illegal?

Are paid proxies safe?

Can my IP be tracked if I use a proxy?

Can I make my own proxy server?

How much does a proxy service cost?

What is the best proxy server to buy?

What payment methods are accepted?

Can I cancel or refund my purchase?

What is a proxy server?

Can Oxylabs proxies be used as web proxy servers?

Can you be traced with a proxy?

What are the risks of using free proxy servers?

How to buy proxy server?

Get the latest news from data gathering world

Scale up your business with Oxylabs®

Proxies

Advanced proxy solutions

Data Collection

Datasets

Resources

Innovation hub