Scraping e-commerce websites is a common practice driven by several key reasons. It enables businesses to conduct market research, monitor prices, enhance product catalogs, generate leads, and aggregate content. Automating the data extraction process allows businesses to save time and resources while obtaining comprehensive and up-to-date information. In this tutorial, you’ll learn how web scraping e-commerce websites works using Python and Oxylabs’ Web Scraper API. This e-commerce scraping tool will help you avoid anti-bot protection and CAPTCHA without writing a complex script.

For your convenience, we also prepared this tutorial in a video format:

Let’s get started.

1. Project setup

First, you’ll have to install Python. Please download it from here.

2. Install dependencies

Next, you’ll need to install a couple of libraries to interact with our e-commerce product scraper and parse HTML. You can run the following command:

pip install bs4 requestsThis will install Beautiful Soup and the requests libraries for you. Beautiful Soup is the ideal library for scraping static HTML content and is particularly useful for extracting data from static pages.

3. Import libraries

Now, you can import these libraries using the following code:

from bs4 import BeautifulSoup

import requests4. Retrieve API credentials

Next, you’ll have to log in to your Oxylabs E-Commerce Scraper API account to retrieve API credentials. If you don’t have an account yet, you can simply sign up for a free trial. There, you’ll get the necessary credentials for the e-commerce scraper.

Once you've retrieved your credentials, you can add them to your code.

username, password = 'USERNAME', 'PASSWORD'Don’t forget to replace USERNAME and PASSWORD with your username and password.

5. Prepare payload

Oxylabs’ Web Scraper API expects a JSON payload in a POST request. You’ll have to prepare the payload before sending the POST request. The source must be set to universal.

url = "https://sandbox.oxylabs.io/products"

payload = {

'source': 'universal',

'render': 'html',

'url': url,

}render: html tells the API to run JavaScript when loading the web page content.

Note: For demonstration, we’ll scrape e-commerce data from the sandbox.oxylabs.io store page.

6. Send a POST request to the Web Scraper API

Let’s send the payload using the requests module’s post() method. You can pass the credentials using the auth parameter.

response = requests.post(

'https://realtime.oxylabs.io/v1/queries',

auth=(username, password),

json=payload,

)

print(response.status_code)Since the payload needs to be in JSON format, you can use the json parameter of the post() method. If you run this code now, you should see the output of the status_code as 200. Any other numbers indicate errors. If you get one, check your credentials, payload, and URL thoroughly and make sure they are all correct.

7. Parse Data

You can extract the HTML content from the JSON response of the API and create a Beautiful Soup object named soup.

content = response.json()["results"][0]["content"]

soup = BeautifulSoup(content, "html.parser")After extracting the product data, you may need to reformat certain fields, such as adjusting product URLs or cleaning up text, to ensure consistency. Once the data is properly reformatted, you can save it to a CSV file or database. Saving the extracted data in a structured format allows for efficient storage and further analysis.

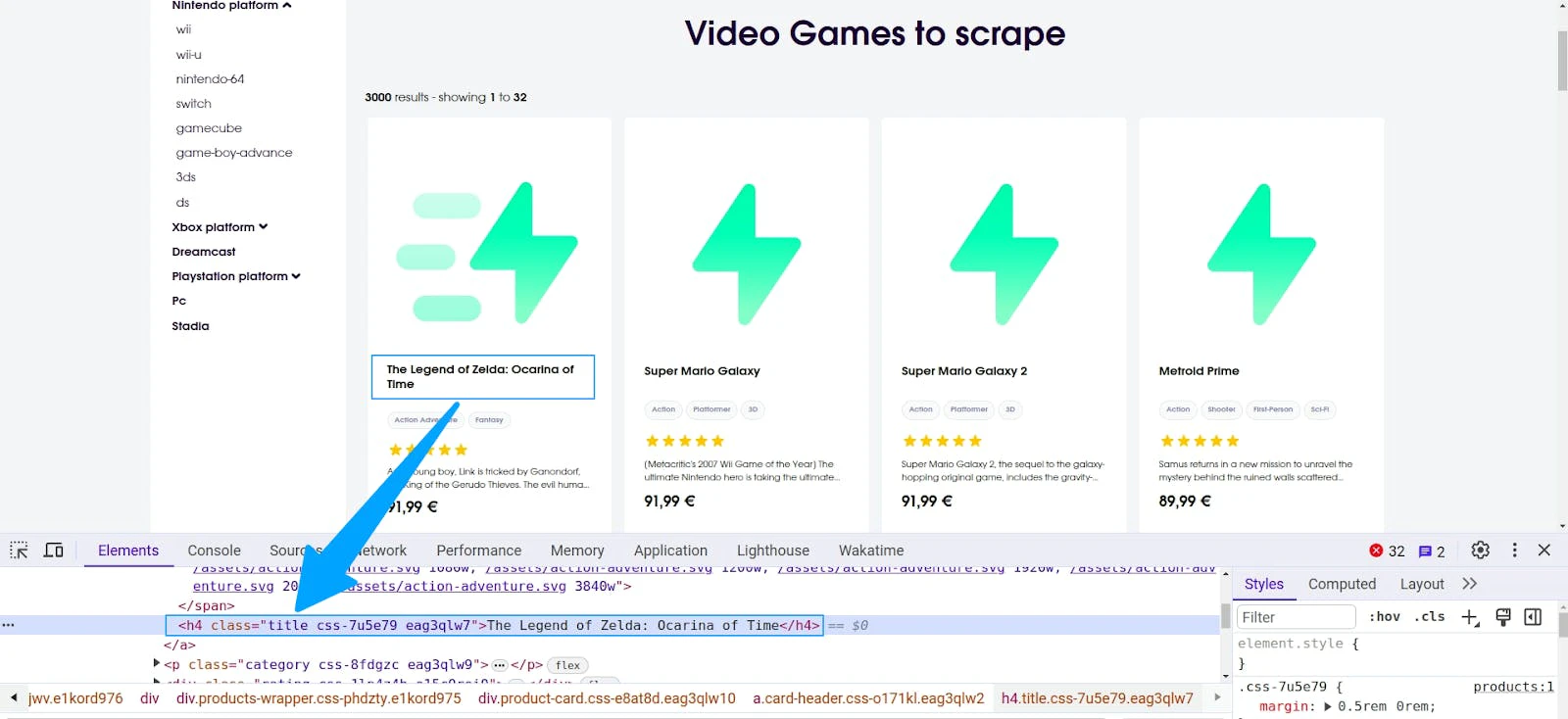

Using Web Browser’s developer tools, you can inspect the various elements of the website to find the necessary CSS selectors. Once you've gathered the CSS selectors, you can use the soup object to extract those elements. To activate the developer tools, you can simply browse to the target web page, right-click, and select inspect. Let’s parse the title, price, and availability of all products.

Title

If you inspect the title, you’ll notice it’s inside a <h4> tag with a class title.

So, you can use the soup object to extract the title as below:

title = soup.find('h4', {"class": "title"}).get_text(strip=True)Price

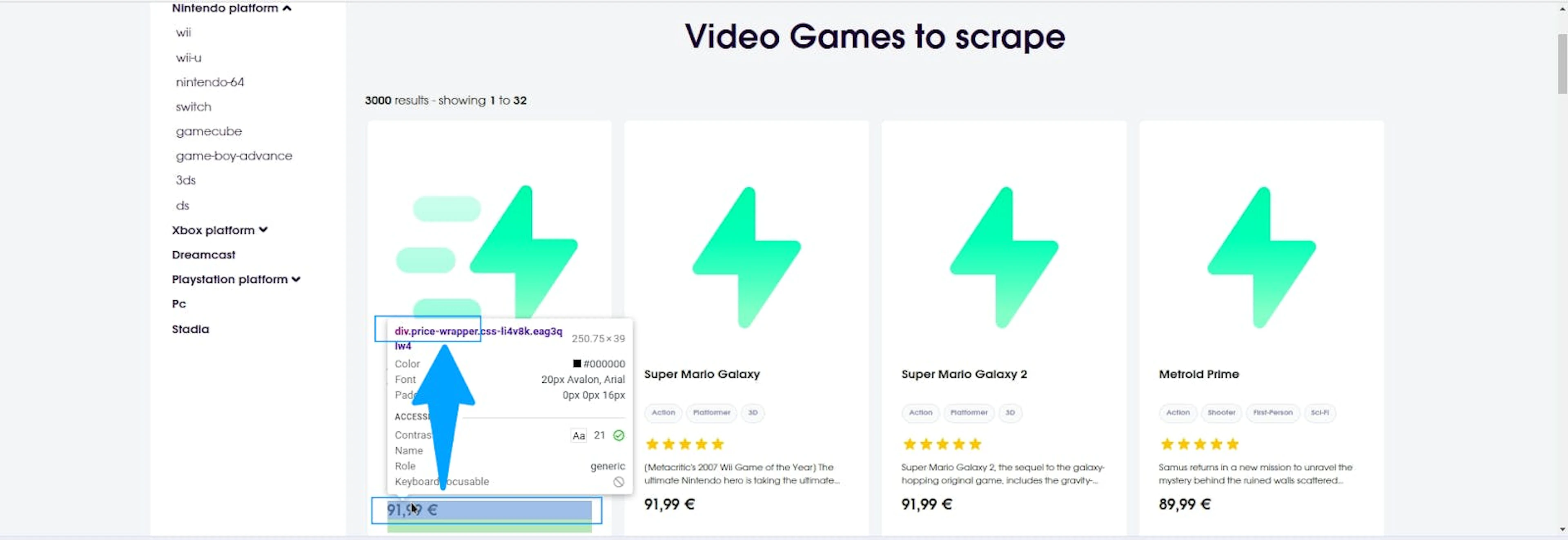

Similarly, inspect the price element.

As you can see, it’s wrapped in a <div> with the class price-wrapper. So, use the find() method, as shown below, to extract the price text.

price = soup.find('div', {"class": "price-wrapper"}).get_text(strip=True)Availability

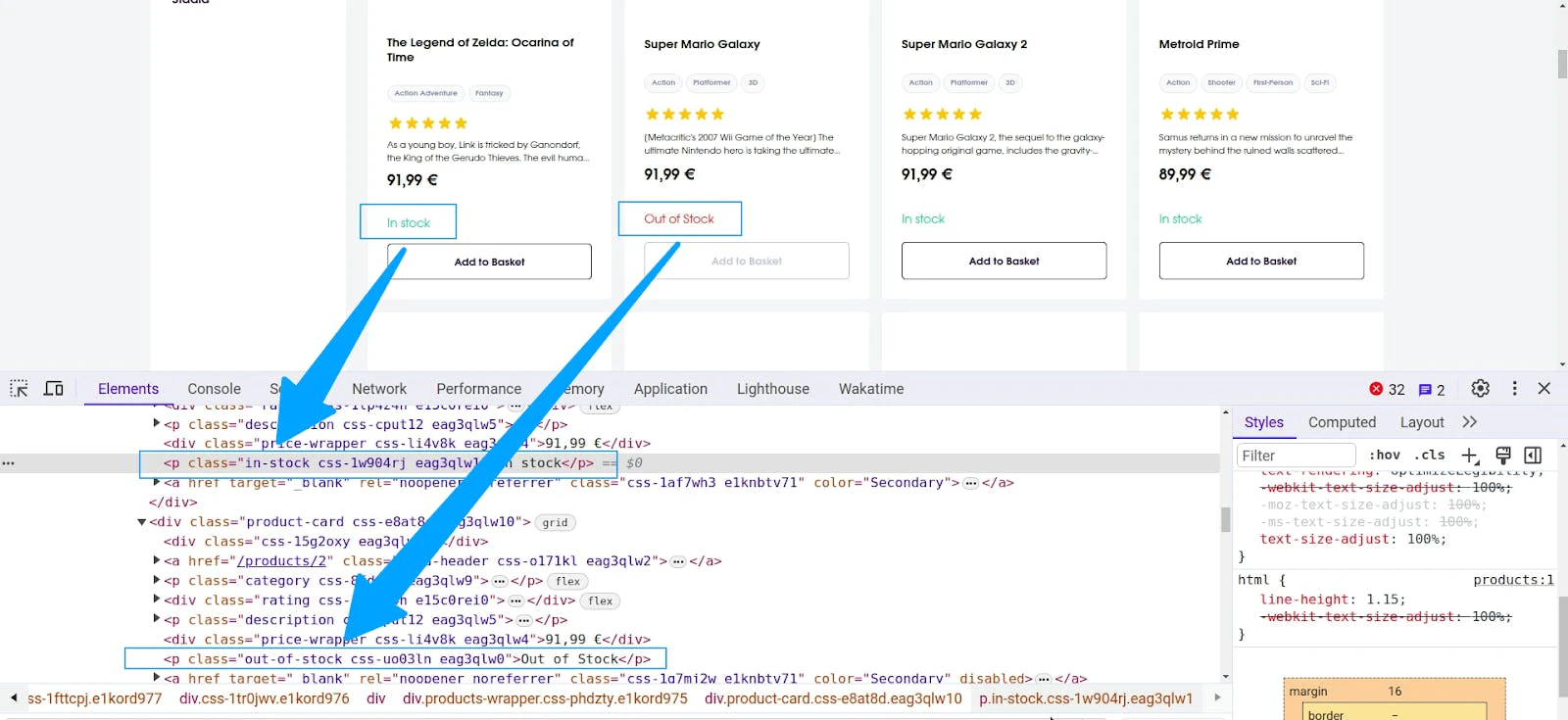

There are two types of availability on this website: In Stock and Out of Stock. If you inspect both elements, you’ll notice they have different classes.

Fortunately, the Beautiful Soup library’s find() method supports multiple class lookups. You’ll have to pass the classes in a list object.

availability = soup.find('p', {"class": ["in-stock", "out-of-stock"]}).get_text(strip=True)For more efficient scraping, take a look at the best practices of how to use find() & find_all() in BeautifulSoup.

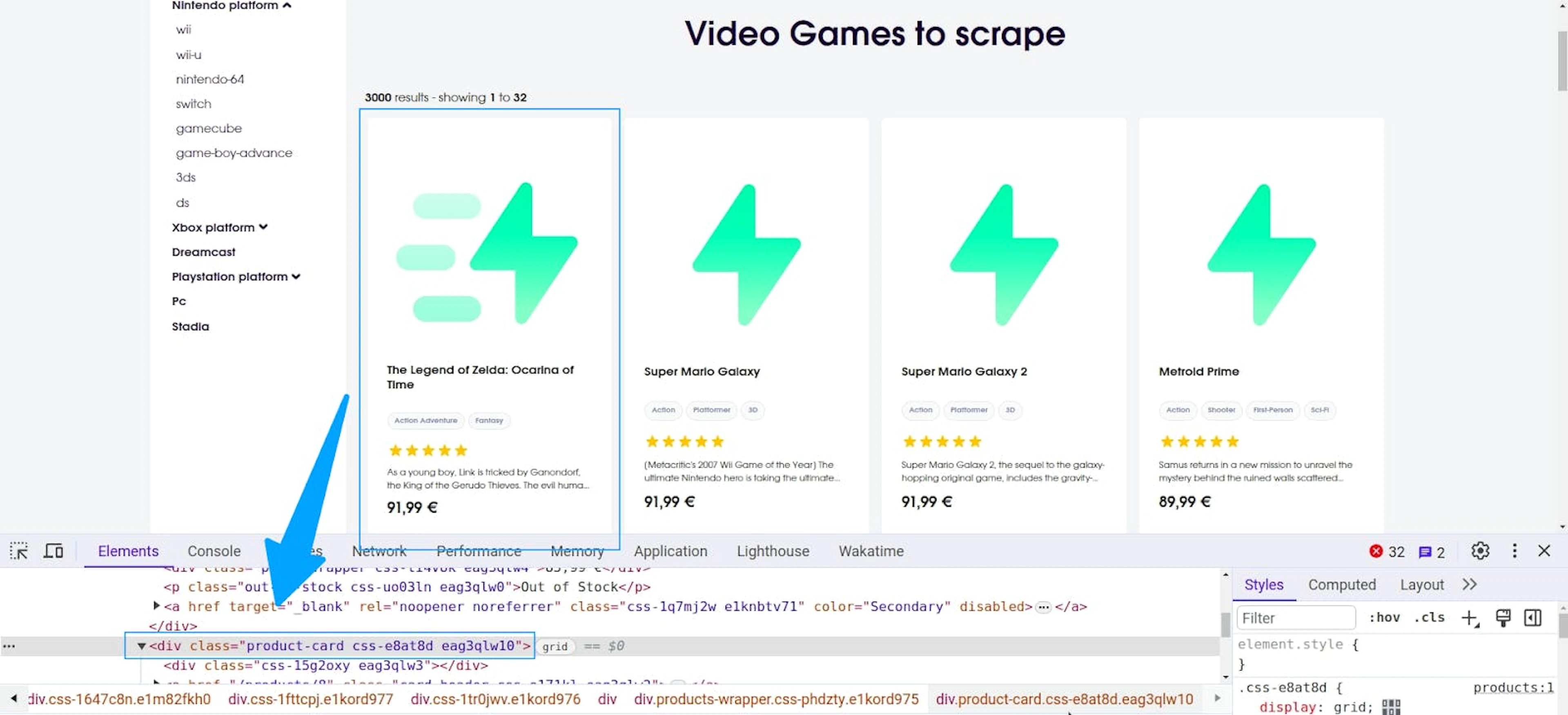

All products

To extract all product data, you’ll have to inspect the product elements and find the appropriate CSS selectors.

Since each of the product elements is wrapped in a <div> with class product-card, you can loop through each element using a for loop.

data = []

for elem in soup.find_all("div", {"class": "product-card"}):

title = elem.find('h4', {"class": "title"}).get_text(strip=True)

price = elem.find('div', {"class": "price-wrapper"}).get_text(strip=True)

availability = elem.find('p', {"class": ["in-stock", "out-of-stock"]}).get_text(strip=True)

data.append({

"title": title,

"price": price,

"availability": availability,

})

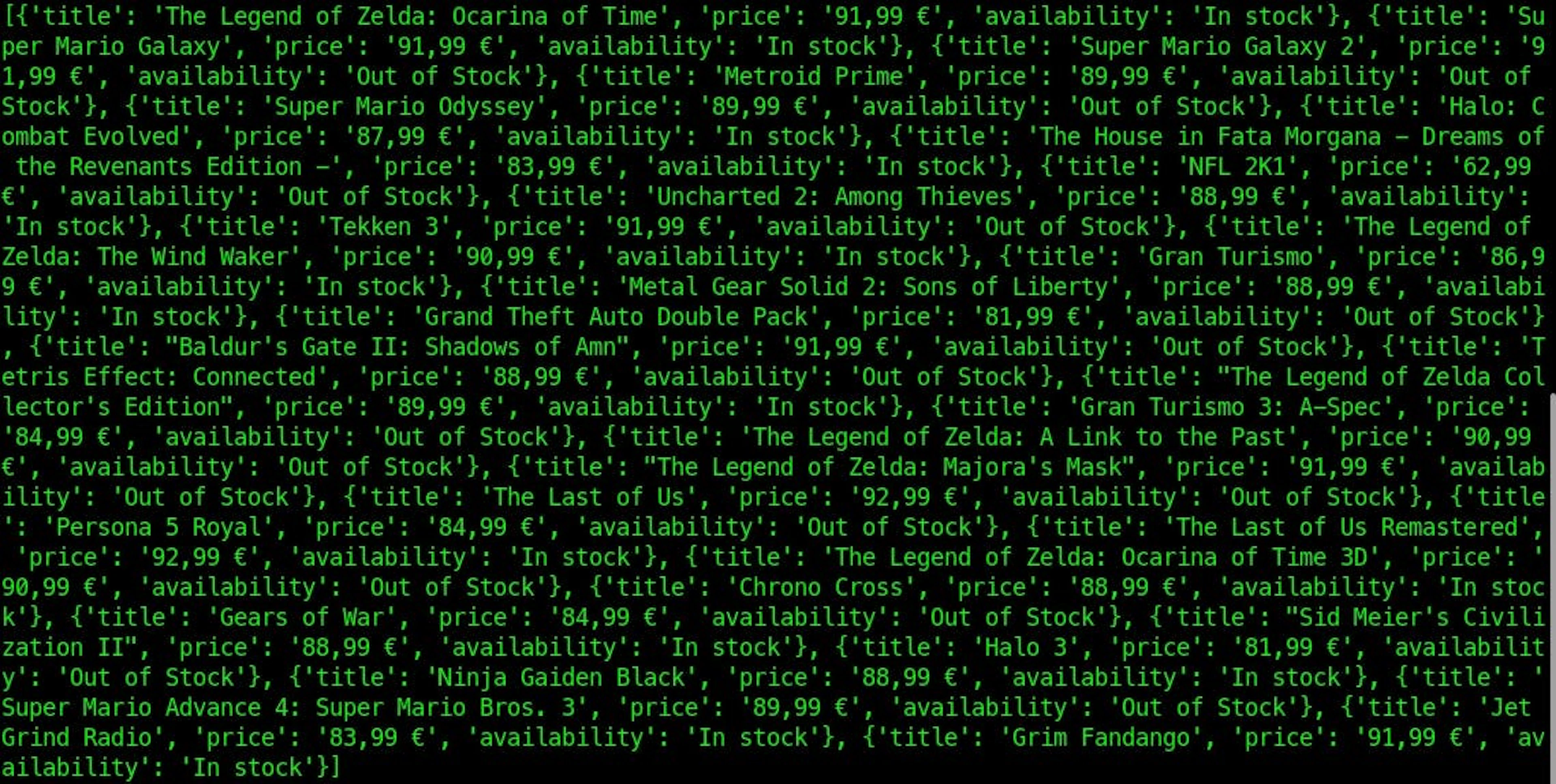

print(data)The data list will contain all the product data.

Instead of parsing with BeautifulSoup, you can generate a parser using the free Custom Parser feature. Then, as you scale your operations, saving scraped data to cloud storage (S3, GCS, etc.) can create a new challenge: thousands of small files can cause storage inefficiencies and bottlenecks. Consider using the Result Aggregator feature, which merges results into aggregate files, reducing storage and transfer costs while simplifying data pipeline integration.

Full e-commerce scraping example code

The entire e-commerce web scraper is given below for your convenience. You can use it as a building block for your next e-commerce website scraper. You'll only have to replace the URL and parsing logic with your own.

from bs4 import BeautifulSoup

import requests

username, password = 'USERNAME', 'PASSWORD'

url = "https://sandbox.oxylabs.io/products"

payload = {

'source': 'universal',

'render': 'html',

'url': url,

}

response = requests.post(

'https://realtime.oxylabs.io/v1/queries',

auth=(username, password),

json=payload,

)

print(response.status_code)

content = response.json()["results"][0]["content"]

soup = BeautifulSoup(content, "html.parser")

data = []

for elem in soup.find_all("div", {"class": "product-card"}):

title = elem.find('h4', {"class": "title"}).get_text(strip=True)

price = elem.find('div', {"class": "price-wrapper"}).get_text(strip=True)

availability = elem.find('p', {"class": ["in-stock", "out-of-stock"]}).get_text(strip=True)

data.append({

"title": title,

"price": price,

"availability": availability,

})

print(data)Here’s the output:

Conclusion

So far, you've learned how to scrape e-commerce websites using Python. You also explored Oxylabs' Web Scraper API and learned how to use it for web scraping e-commerce websites with ease. By using the techniques described in this article, you can perform large-scale data extraction for e-commerce on websites with bot protection and CAPTCHA.

New to web scraping? Learn what is web scraping and how to scrape data on our blog.

If you'd like to scrape e-commerce data with Web Scraper API, we have various targets available that you can use to scrape Amazon data, scrape 1688 data, scrape eBay data, and more.

Frequently asked questions

How do I scrape an e-commerce website?

For e-commerce and product details data extraction, you’ll first need to pick a programming language you are most comfortable with. Python, Go, JavaScript, Ruby, and Elixir are popular programming languages with excellent support for large-scale e-commerce data scraping. After that, you’ll have to find the necessary tools and libraries available to help you extract data from the target website. Many e-commerce websites load content dynamically using JavaScript, requiring browser automation tools like Selenium or Puppeteer to scrape effectively. These tools can automate login, data extraction, and handle CAPTCHAs, which are often implemented as anti-scraping measures. You can learn the web scraping best practices here.

Is it ethical to web scrape a website?

How to choose the right web scraping tool?

Can I use Python to scrape e-commerce sites?

What data can I extract from e-commerce websites?

What is an e-commerce price scraper?

About the author

Maryia Stsiopkina

Former Senior Content Manager

Maryia Stsiopkina was a Senior Content Manager at Oxylabs. As her passion for writing was developing, she was writing either creepy detective stories or fairy tales at different points in time. Eventually, she found herself in the tech wonderland with numerous hidden corners to explore. At leisure, she does birdwatching with binoculars (some people mistake it for stalking), makes flower jewelry, and eats pickles.

All information on Oxylabs Blog is provided on an "as is" basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Oxylabs Blog or any third-party websites that may be linked therein. Before engaging in scraping activities of any kind you should consult your legal advisors and carefully read the particular website's terms of service or receive a scraping license.

Related articles

Try Web Scraper API

Choose Oxylabs' Web Scraper API to unlock real-time product data hassle-free.

Get the latest news from data gathering world

Scale up your business with Oxylabs®

Proxies

Advanced proxy solutions

Data Collection

Datasets

Resources

Innovation hub

Try Web Scraper API

Choose Oxylabs' Web Scraper API to unlock real-time product data hassle-free.