If you work in development (whether you’re part of the tech team or in a role where you often communicate with it), you’ll most likely come across the term data parsing. Simply put, it’s a process in which one data format is transformed into another, more readable data format. But that’s a rather simplified explanation.

In this article, we'll dig a little deeper into what parsing is in programming and discuss whether building an in-house data parser is more beneficial to a business or whether it is better to buy a data extraction solution that already does the parsing for you.

What is data parsing?

Data parsing is the process of transforming data from one format to another, making it easier to work with. Parsing typically helps to reorganize unstructured or semi-structured data into a cleaner, more readable format, ready for further operations like data analysis.

For example, consider an HTML document from an e-commerce web page. A raw HTML file contains product titles alongside many other elements, their attributes, CSS code, and additional data. After parsing, the output would contain only the extracted product titles, making the data much easier to read and interpret. Here's a basic side-by-side comparison of a single HTML element and its parsed version:

HTML data: <li href="/products/category/xbox-platform/xbox-360" class="css-dpki72 eyah4m91">xbox-360</li>

Parsed data: "xbox-360"
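
To make the comparison above concrete, here is a minimal sketch in Python that turns the HTML element into the parsed value. It relies only on the standard library's `html.parser` module; the class name `ListItemTextParser` and the helper `extract_list_items` are made up for this illustration and are not part of any product mentioned in this article.

```python
from html.parser import HTMLParser


class ListItemTextParser(HTMLParser):
    """Collects the text content of every <li> element it encounters."""

    def __init__(self):
        super().__init__()
        self._inside_li = False
        self.items = []

    def handle_starttag(self, tag, attrs):
        # Start a new, empty entry whenever an <li> opens.
        if tag == "li":
            self._inside_li = True
            self.items.append("")

    def handle_endtag(self, tag):
        if tag == "li":
            self._inside_li = False

    def handle_data(self, data):
        # Text outside <li> elements is ignored.
        if self._inside_li:
            self.items[-1] += data


def extract_list_items(html: str) -> list[str]:
    """Hypothetical helper: return the text of each <li> in the given HTML."""
    parser = ListItemTextParser()
    parser.feed(html)
    parser.close()
    return [item.strip() for item in parser.items]


raw_html = (
    '<li href="/products/category/xbox-platform/xbox-360" '
    'class="css-dpki72 eyah4m91">xbox-360</li>'
)
print(extract_list_items(raw_html))  # ['xbox-360']
```

The attributes and class names are dropped, and only the text a reader cares about remains.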

What does a parser do?

A well-made parser will distinguish which information in the HTML string is needed. Following its pre-written code and rules, it will pick out the necessary information and convert it into JSON, CSV, or a table, for example.
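
As a rough illustration of "pick out what is needed, then convert", the sketch below keeps only links marked with a `product` class and emits them as JSON. The sample markup, the `product` class name, and the names `ProductLinkParser` and `html_to_json` are assumptions made for this example; a real page would need its own rules.

```python
import json
from html.parser import HTMLParser


class ProductLinkParser(HTMLParser):
    """Keeps only <a class="product"> elements and ignores everything else."""

    def __init__(self):
        super().__init__()
        self._current = None  # record being built while inside a matching <a>
        self.products = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        classes = (attr_map.get("class") or "").split()
        if tag == "a" and "product" in classes:
            self._current = {"title": "", "url": attr_map.get("href")}

    def handle_data(self, data):
        if self._current is not None:
            self._current["title"] += data

    def handle_endtag(self, tag):
        if tag == "a" and self._current is not None:
            self._current["title"] = self._current["title"].strip()
            self.products.append(self._current)
            self._current = None


def html_to_json(html: str) -> str:
    """Hypothetical helper: parse product links and serialize them as JSON."""
    parser = ProductLinkParser()
    parser.feed(html)
    parser.close()
    return json.dumps(parser.products, indent=2)


# Made-up sample page: one navigation link (ignored) and two product links.
sample_html = """
<nav><a href="/login">Log in</a></nav>
<ul>
  <li><a class="product" href="/products/xbox-360">Xbox 360</a></li>
  <li><a class="product" href="/products/xbox-one">Xbox One</a></li>
</ul>
"""
print(html_to_json(sample_html))
```

The rules here live in `handle_starttag`; changing what counts as "needed" means changing that one condition.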

It’s important to mention that a parser itself is not tied to a data format. It’s a tool that converts one data format into another; how it converts the data, and into what, depends on how the parser was built. For instance, a parser can be built to convert cURL to JSON, but it could just as easily be designed to convert other data formats like XML to CSV, depending on the specific requirements and configurations defined during development.
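
The XML-to-CSV case mentioned above can be sketched with Python's standard `xml.etree.ElementTree` and `csv` modules. The `<catalog>`/`<product>` layout, the field names, and the function `xml_to_csv` are invented for this example; the point is only that the same parsing idea applies to a different pair of formats.

```python
import csv
import io
import xml.etree.ElementTree as ET


def xml_to_csv(xml_text: str, fields: list[str]) -> str:
    """Hypothetical helper: write one CSV row per <product> element."""
    root = ET.fromstring(xml_text.strip())
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(fields)  # header row
    for product in root.iter("product"):
        # A missing child element becomes an empty cell.
        writer.writerow([product.findtext(field, default="") for field in fields])
    return buffer.getvalue()


# Made-up input document for illustration.
sample_xml = """
<catalog>
  <product><name>Xbox 360</name><price>199.99</price></product>
  <product><name>Xbox One</name><price>299.99</price></product>
</catalog>
"""
print(xml_to_csv(sample_xml, ["name", "price"]))
```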

Parsers are used for many technologies, including:

Java and other programming languages

HTML and XML

Interactive data language and object definition language

SQL and other database languages

Modeling languages

Scripting languages

HTTP and other internet protocols

To build or to buy?

Now, when it comes to the business side of things, an excellent question to ask yourself is, “Should my tech team build their own parser, or should we simply outsource?”

As a rule of thumb, it’s usually cheaper to build your own parser than to buy a premade tool. However, the question isn’t that simple, and several other factors should be considered before deciding whether to build or buy.

Let’s look into the possibilities and outcomes with both options.

Building a data parser

Let’s say you decide to build your own parser. There are a few distinct benefits to this decision:

A parser can be anything you like. It can be tailor-made for any work (parsing) you require.

It’s usually cheaper to build your own parser.

You’re in control of whatever decisions need to be made when updating and maintaining your parser.

But, as with anything, there are also downsides to building your own parser:

You’ll need to hire and train a whole in-house team to build the parser.

Maintaining the parser is necessary, which means more in-house expenses and time.

You’ll need to buy or build a server that is fast enough to parse your data at the speed you need.

Being in control isn’t necessarily easy or beneficial – you’ll need to work closely with the tech team to make the right decisions to create something good, spending a lot of your time planning and testing.

Building your own has its benefits, but it takes a lot of your resources and time, especially if you need to develop a sophisticated parser for large volumes of data. Such a parser requires more maintenance and more people, and those people are expensive, because building one takes a highly skilled developer team.

Buying a data parser

So what about buying a tool that parses your data for you? Let’s start with the benefits:

You won’t need to spend any money on human resources, as everything will be done for you, including maintaining the parser and the servers;

Any issues that arise will be solved a lot faster, as the people you buy your tools from have extensive know-how and are familiar with their technology;

It’s also less likely that the parser will crash or experience issues in general, as it will be tested and refined to fit the market’s requirements;

You’ll save a lot on human resources and your own time, as the decisions on how to build the best parser will be handled by the provider.

Of course, there are a few downsides to buying a parser as well:

It will be slightly more expensive;

It’s likely you won’t have much control over it.

Now, it seems that there are a lot of benefits to simply buying one. But the choice becomes easier once you consider what sort of parser you’ll need. An expert developer can probably build a simple parser within a week. A complex one can take months, and that’s a lot of time and resources.

It also depends on whether you’re a big business that has the time and resources to build and maintain a parser, or a smaller business that needs to get things done to be able to grow within the market.

How we do it: Web Scraper API

Here at Oxylabs, we have a reliable data gathering tool – Web Scraper API. This tool is specifically built to scrape search engines, e-commerce marketplaces, such as Walmart, real estate platforms, and any other website on a large scale. We covered all the important details about our Web Scraper API and how it works in one of our articles, so make sure to check it out.

Dedicated parser

The dedicated parser enables automated parsing of pre-defined fields from supported websites. It covers the most popular e-commerce websites, like Amazon, eBay, Walmart, and others, and the major search engines, namely Google, Bing, Baidu, and Yandex.

Our dedicated parser handles a large volume of data every day. In February 2019 alone, 12 billion requests were made. Based on our 2019 Q1 statistics, total requests grew by 7.02% compared to Q4 2018, and the numbers continued to rise in Q2 2019.

Our tech team has been working on this project for a few years now, and having this much experience, we can say with confidence that the parser we built can handle any volume of data one might request.

Custom Parser

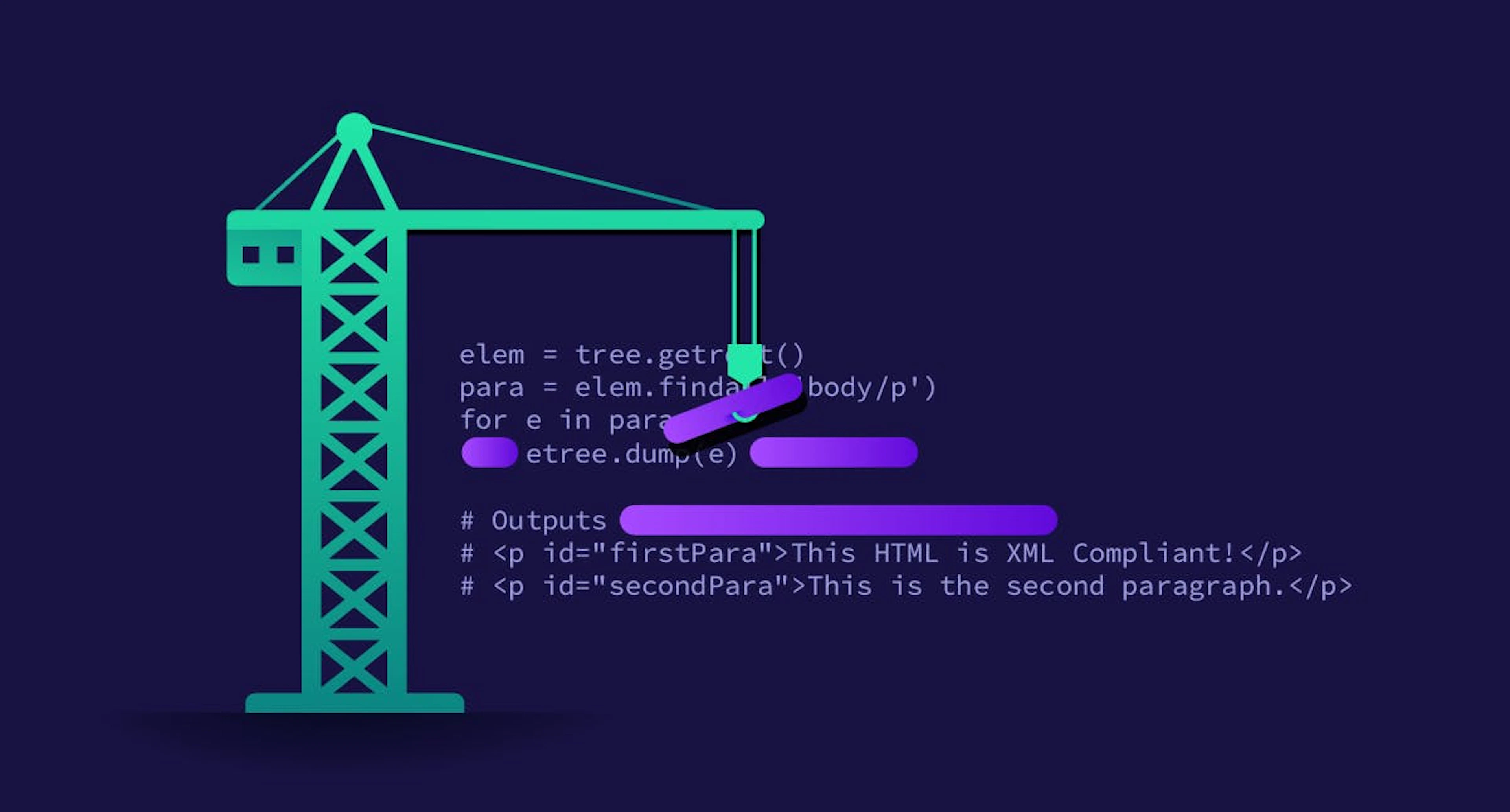

Another free feature of Oxylabs' Web Scraper API is the Custom Parser, which gives full control of the parsing process to the users, providing our clients the much-needed flexibility in their data-gathering efforts. In simple terms, it allows users to write their own parsing instructions for any website. It uses XPath or CSS selectors to navigate the HTML or XML documents and select specific elements.
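
To show the general idea of selector-based parsing instructions, here is a small, generic sketch that applies XPath expressions to a document. It is not the Custom Parser's actual instruction format or API: the `RULES` dictionary, the `apply_rules` function, and the sample markup are all hypothetical, and the code uses the limited XPath subset supported by Python's standard `xml.etree.ElementTree`, which expects well-formed markup.

```python
import xml.etree.ElementTree as ET

# Made-up, well-formed sample page for illustration.
page = ET.fromstring("""
<html>
  <body>
    <div class="product">
      <h2 class="title">Xbox 360</h2>
      <span class="price">199.99</span>
    </div>
    <div class="product">
      <h2 class="title">Xbox One</h2>
      <span class="price">299.99</span>
    </div>
  </body>
</html>
""".strip())

# Hypothetical parsing rules: output field name -> XPath relative to one item.
RULES = {
    "title": "./h2[@class='title']",
    "price": "./span[@class='price']",
}


def apply_rules(root, item_xpath, rules):
    """Select every item node, then evaluate each field's XPath inside it."""
    results = []
    for node in root.findall(item_xpath):
        results.append({field: node.findtext(path) for field, path in rules.items()})
    return results


products = apply_rules(page, ".//div[@class='product']", RULES)
print(products)
# [{'title': 'Xbox 360', 'price': '199.99'}, {'title': 'Xbox One', 'price': '299.99'}]
```

Keeping the selectors in a plain dictionary means the rules can be updated when a website's layout changes without touching the extraction code. Note that `[@class='product']` matches only an exact attribute value in this XPath subset, so elements carrying several classes would need a different approach.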

The Custom Parser makes up for the shortcomings of the dedicated parser, as it enables users to parse data from any website that’s not supported by the dedicated parser. Sometimes, the dedicated parser can’t reach certain information even if the website is supported; in such cases, the desired data can be parsed using the Custom Parser.

As you can see, it’s not enough to build a parser and hope for the best. The development process is extensive and requires complex solutions. As websites constantly change, you need to continuously maintain and improve your parser to be able to consistently access and parse the data points you want.

However, with Parser Presets you can also save and reuse your parsing instructions across multiple jobs, and activate self-healing to automatically adjust your presets as the target website changes. This makes managing repetitive or complex parsing tasks much easier and more consistent when working at scale.

So – to build or to buy? Well, building a parser from scratch requires several years of experience, improvements, and constant maintenance to ensure optimal performance – in all honesty, the end result is quite expensive.

Wrapping up

Hopefully, you now have a decent understanding of what data parsing is. When making your decision, consider how sophisticated your parser needs to be. If you are parsing large volumes of data, you will need good developers on your team to develop and maintain the parser. But if you need a smaller, less complicated parser, it’s probably best to build your own. Here's a practical tutorial on how to read and parse data with Python.

Also consider whether you are a large company with a lot of resources or a smaller one that needs the right tools to keep growing.

Oxylabs' clients have significantly increased growth with our Web Scraper API and datasets that offer a ready-to-use collection of public data. If you are also looking for ways to improve your business or have more questions about data parsing, contact our team!

Make sure to check out our blog for more articles on data acquisition and practical tutorials, such as Scraping Baidu Search Results with Python: A Step-by-Step Guide.

People also ask

What tools are required for data parsing?

After web scraping tools, such as a Python web scraper, provide the required data, there are several options for data parsing. BeautifulSoup and LXML are two commonly used data parsing tools.

About the author

Gabija Fatėnaitė

Former Director of Product & Event Marketing

Gabija Fatėnaitė was a Director of Product & Event Marketing at Oxylabs. Having grown up on video games and the internet, she grew to find the tech side of things more and more interesting over the years. So if you ever find yourself wanting to learn more about proxies (or video games), feel free to contact her - she’ll be more than happy to answer you.

All information on Oxylabs Blog is provided on an "as is" basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Oxylabs Blog or any third-party websites that may be linked therein. Before engaging in scraping activities of any kind you should consult your legal advisors and carefully read the particular website's terms of service or receive a scraping license.

Web Scraper API for smooth data collection

Forget the hassle of building a parser and use our all-in-one product solution instead.
