If you’re looking for a way to get public web data regularly scraped at a set time period, you’ve come to the right place. This tutorial will show you how to automate your web scraping processes using AutoScaper – one of the several Python web scraping libraries available.

Before getting started, you may want to check out this in-depth guide for building an automated web scraper using various web scraping tools supported by Python. If you want to learn more complex scraping tactics in general, take a look at this advanced web scraping with Python guide.

You can also check a video tutorial, where we cover the web scraping automation topic and provide even more options on how to do it:

Now, let’s get into it.

Automated web scraping with Python AutoScraper library

AutoScraper is a web scraping library written in Python3; it’s known for being lightweight, intelligent, and easy to use – even beginners can use it without an in-depth understanding of a web scraping.

AutoScraper accepts the URL or HTML of any website and scrapes the data by learning some rules. In other words, it matches the data on the relevant web page and scrapes data that follow similar rules.

Methods to install AutoScraper

First things first, let’s install the AutoScraper library. There are actually several ways to install and use this library, but for this tutorial, we’re going to use the Python package index (PyPI) repository using the following pip command:

pip install autoscraperScraping products with AutoScraper

This section showcases an example to auto scrape public data with the AutoScraper module in Python using the Oxylabs Scraping Sandbox website as a target.

The target website has three thousand products in different categories. As the screenshot shows, the links to all the product category pages are available on the page's left section:

Scraping product category URLs

Now, if you want to scrape the links to the category pages, you can do it with the following trivial code:

from autoscraper import AutoScraper

UrlToScrape = "https://sandbox.oxylabs.io/products"

WantedList = [

"https://sandbox.oxylabs.io/products/category/nintendo",

"https://sandbox.oxylabs.io/products/category/dreamcast"

]

Scraper = AutoScraper()

data = Scraper.build(UrlToScrape, wanted_list=WantedList)

print(data)Note that the Oxylabs Sandbox uses JavaScript to load some elements dynamically, such as the category buttons. Since AutoScraper doesn’t support JavaScript rendering, you won’t be able to scrape all category links. For instance, you can access the “Xbox platform” category but not the subcategories inside it:

With that in mind, the code above first imports AutoScraper from the autoscraper library. Then, we provide the URL from which we want to scrape the information in the UrlToScrape.

The WantedList assigns sample data that we want to scrape from the given subject URL. To get the category page links from the target page, you need to provide two example URLs to the WantedList. One link is a data sample of a JavaScript-rendered category button, while another link is a data sample of a static category button that doesn’t have any subcategories. Try running the code with only one category URL in the WantedList to see the difference.

The AutoScraper() creates an AutoScraper object to initiate different functions of the autoscraper library. The Scraper.build() method scrapes the data similar to the WantedList from the target URL.

After executing the Python script above, the data list will have the category page links available at https://sandbox.oxylabs.io/products. The output of the script should look like this:

['https://sandbox.oxylabs.io/products/category/nintendo', 'https://sandbox.oxylabs.io/products/category/xbox-platform', 'https://sandbox.oxylabs.io/products/category/playstation-platform', 'https://sandbox.oxylabs.io/products/category/dreamcast', 'https://sandbox.oxylabs.io/products/category/pc', 'https://sandbox.oxylabs.io/products/category/stadia']If you need to render dynamic content, you could integrate AutoScraper with a lightweight module like Splash (also see the Scrapy-Splash tutorial) or use more heavy-duty libraries such as Selenium, Playwright, Puppeteer, or others.

Scraping product information from a single webpage

So far, we’ve looked at the method of extracting URLs from a page, but we still need to learn about scraping portions of data. This section discusses the use of AutoScraper to scrape data from a product stored on a specific page.

Say that we want to get the title of the product along with its price; we can train and build an AutoScraper model as follows:

from autoscraper import AutoScraper

UrlToScrape = "https://sandbox.oxylabs.io/products/3"

WantedList = ["Super Mario Galaxy 2", "91,99 €"]

InfoScraper = AutoScraper()

InfoScraper.build(UrlToScrape, wanted_list=WantedList)The script above feeds a URL of a product page and a sample of required information from that page to the AutoScraper model. The build() method learns the rules for scraping information and preparing our InfoScraper for future use.

Now, let’s apply this InfoScraper tactic to a different product’s URL and see if it returns the desired information.

another_product_url = "https://sandbox.oxylabs.io/products/39"

data = InfoScraper.get_result_similar(another_product_url)

print(data)Output:

['Super Mario 64', '91,99 €']The script above applies InfoScraper to another_product_url and prints the data. Depending on the target website you want to scrape, you may want to use the get_result_exact() function instead of get_result_similar(). This should ensure that AutoScraper returns an accurate product title and price as defined by the WantedList.

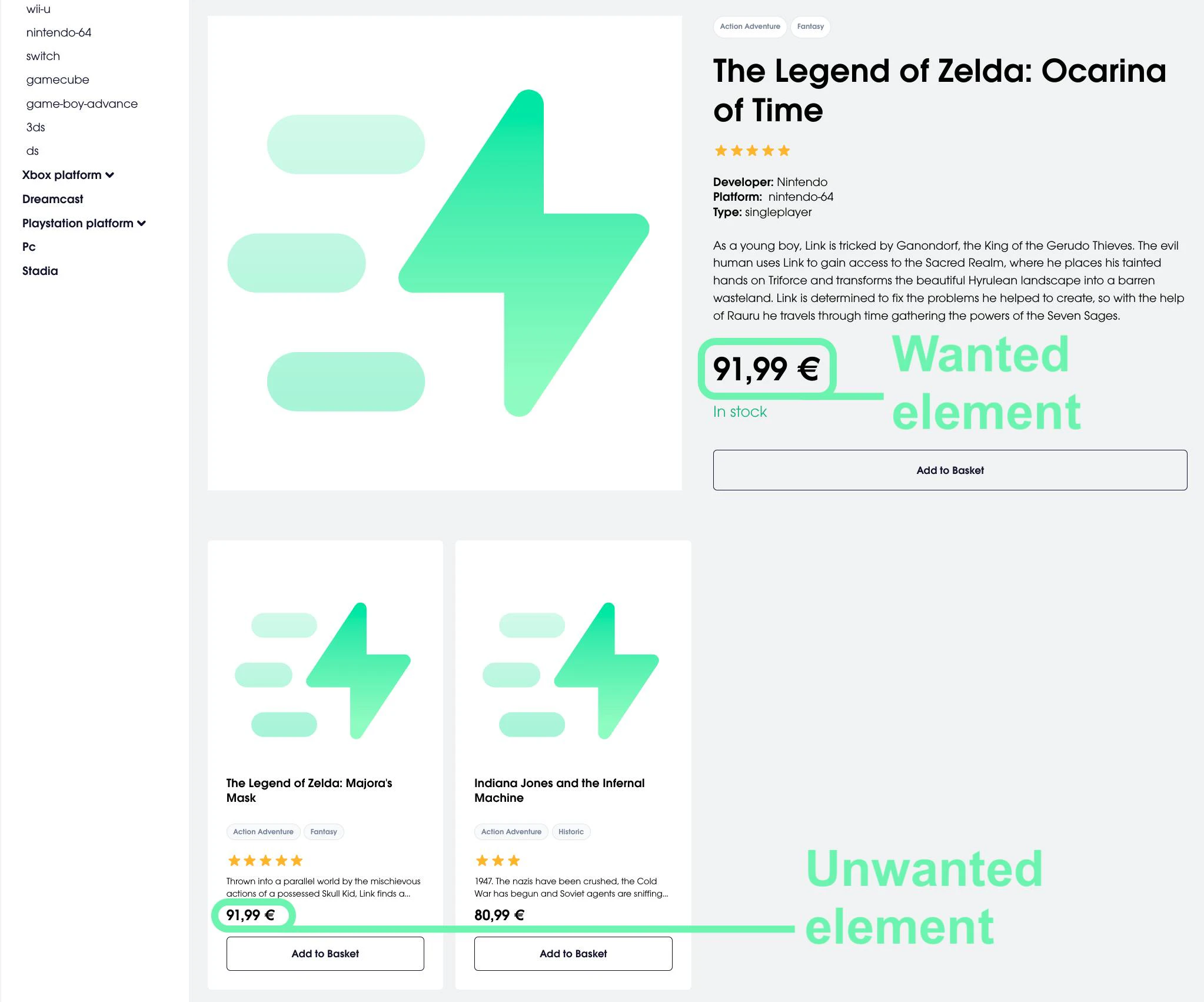

Additionally, it’s very important to provide a UrlToScrape that doesn’t have duplicate data that may match some unwanted elements. Consider this example:

from autoscraper import AutoScraper

UrlToScrape = "https://sandbox.oxylabs.io/products/1"

WantedList = ["The Legend of Zelda: Ocarina of Time", "91,99 €"]

InfoScraper = AutoScraper()

InfoScraper.build(UrlToScrape, wanted_list=WantedList)

another_product_url = "https://sandbox.oxylabs.io/products/39"

data = InfoScraper.get_result_exact(another_product_url)

print(data)Here, the UrlToScrape has the price of 91,99 € twice on the page, as highlighted in the screenshot:

Hence, the code also matches the unwanted element with the price of 91,99 € and additionally returns the price of a related product like this:

['Super Mario 64', '87,99 €', '91,99 €']One way to solve this problem is to use the grouped=True parameter to return the data points with their corresponding AutoScraper rule names. Next, use the keep_rules() function and pass the rules you want to keep. In our code, we have to turn the data dictionary into a list to access and pass over the first and the last rule, containing accurate product title and price:

from autoscraper import AutoScraper

UrlToScrape = "https://sandbox.oxylabs.io/products/1"

WantedList = ["The Legend of Zelda: Ocarina of Time", "91,99 €"]

InfoScraper = AutoScraper()

InfoScraper.build(UrlToScrape, wanted_list=WantedList)

another_product_url = "https://sandbox.oxylabs.io/products/39"

data = InfoScraper.get_result_exact(another_product_url, grouped=True)

print(data)

print()

InfoScraper.keep_rules([list(data)[0], list(data)[-1]])

filtered_data = InfoScraper.get_result_exact(another_product_url)

print(filtered_data)Please note that this method may return incorrect results if the actual price of the product isn’t the last item returned by the data object.

Scraping all the products on a specific category

Until now, we’ve learned to extract similar and exact information from a specific webpage, including URLs. Let’s learn how to scrape data from all the product pages in one specific category. It can be done by using two scrapers: one for scraping URLs of all the products in this category and the other for scraping information from each link.

Install the pandas and openpyxl libraries via the terminal, which we’ll use to save the data to an Excel file:

pip install pandas openpyxlThen, use the following Python script:

#ProductsByCategoryScraper.py

from autoscraper import AutoScraper

import pandas as pd

#ProductUrlScraper section

Playstation_5_Category = "https://sandbox.oxylabs.io/products/category/playstation-platform/playstation-5"

WantedList = ["https://sandbox.oxylabs.io/products/246"]

Product_Url_Scraper = AutoScraper()

Product_Url_Scraper.build(Playstation_5_Category, wanted_list=WantedList)

#ProductInfoScraper section

Product_Page_Url = "https://sandbox.oxylabs.io/products/246"

WantedList = ["Ratchet & Clank: Rift Apart", "87,99 €"]

Product_Info_Scraper = AutoScraper()

Product_Info_Scraper.build(Product_Page_Url, wanted_list=WantedList)

#Scraping info of each product and storing into an Excel file

Products_Url_List = Product_Url_Scraper.get_result_similar(Playstation_5_Category)

Products_Info_List = []

for Url in Products_Url_List:

product_info = Product_Info_Scraper.get_result_exact(Url)

Products_Info_List.append(product_info)

df = pd.DataFrame(Products_Info_List, columns =["Title", "Price"])

df.to_excel("products_playstation_5.xlsx", index=False)The script above has three main constituents: two sections for building the scrapers and the third one to scrape data from all the products in the Playstation 5 category and save it as an Excel file.

For this step, we’ve built Product_Url_Scraper to scrape all the similar product links on the Playstation 5 Category page. These thirteen links are stored in the Products_Url_List. Now, for each URL in the Products_Url_List, we apply the Product_Info_Scraper and append the scraped information to the Products_Info_List. Finally, the Products_Info_List is converted to a data frame and then exported as an Excel file for future use.

Output:

The output reflects achieving the initial goal – scraping titles and prices of all the products in the Playstation 5 category.

Now, we know how to use a combination of multiple AutoScraper models to scrape data in bulk. You can re-formulate the script above to scrape all the products from all the categories and save them in different Excel files for each category.

How to use AutoScraper with proxies

Proxies are an integral part of the web scraping process: acquiring data without using them involves various risks, such as the target website blocking your IP address. To understand the basics, check out what is proxy and learn the differences between various types of proxies, such as residential proxies, datacenter ones, and find out the difference between them and a VPN. Let’s take a look at the process of using proxies with AutoScraper.

The build, get_result_similar, and get_result_exact functions of AutoScraper accept request-related arguments in the request_args parameter.

Here’s what testing and using AutoScraper with proxy IPs looks like:

from autoscraper import AutoScraper

UrlToScrape = "https://ip.oxylabs.io/"

WantedList = ["YOUR_REAL_IP_ADDRESS"]

proxy = {

"http": "proxy_endpoint",

"https": "proxy_endpoint",

}

InfoScraper = AutoScraper()

InfoScraper.build(UrlToScrape, wanted_list=WantedList)

data = InfoScraper.get_result_similar(UrlToScrape, request_args={"proxies": proxy})

print(data)Visit https://ip.oxylabs.io/, copy the displayed IP address, and paste it instead of YOUR_REAL_IP_ADDRESS in the WantedList. This information will be used to tell AutoScraper what kind of data to look for.

The proxy_endpoint refers to the address of a proxy server in the correct format (e.g., http://customer-USERNAME:PASSWORD@pr.oxylabs.io:7777). The script above should work fine when proper proxy endpoints are added to the proxy dictionary. Every time you run the code, it outputs a proxy IP address that should be different from your actual IP.

After you’ve successfully tested the proxy server connection, you can then use your free proxies or any other proxy solution with the initial request like so:

from autoscraper import AutoScraper

UrlToScrape = "https://sandbox.oxylabs.io/products/3"

WantedList = ["Super Mario Galaxy 2", "91,99 €"]

proxy = {

"http": "proxy_endpoint",

"https": "proxy_endpoint",

}

InfoScraper = AutoScraper()

InfoScraper.build(UrlToScrape, wanted_list=WantedList, request_args={"proxies": proxy})

# Remaining code...Saving and loading an AutoScraper model

AutoScraper provides the ability to save and load a pre-trained scraper. We can use the following script to save the InfoScraper object to a file:

InfoScraper.save("file_name")Similarly, we can load a scraper using:

SavedScraper = AutoScraper()

SavedScraper.load("file_name")Now that we’ve built the automated web scraper let’s move to the last portion of the tutorial – managing automation mechanisms.

Alternative options for web scraping automation

This section will discuss the alternatives for scheduling Python scripts on macOS, Unix/Linux, and Windows operating systems.

Say you want your scraper to periodically visit the Playstation 5 category page and scrape every new product uploaded – it can be done by scheduling the ProductsByCategoryScraper.py script. This script, whenever executed, scrapes data from all the products in the Playstation 5 category page and returns data in an Excel file.

You can schedule a Python script through:

Schedule module in Python: tutorial

Adding it to the crontab (cron table): tutorial

Creating a daemon or background service through systemd: tutorial

Task Scheduler in Windows: tutorial

Crontab and systemd (system daemon) methods are specific to the Unix-based operating systems, including Linux and macOS. Meanwhile, the Task Scheduler helps to schedule a Python script on Windows.

Frequently Asked Questions

What is the difference between a web crawler and a web scraper?

Simply put, a web scraper is a tool for extracting data from one or more websites; meanwhile, a crawler finds or discovers URLs or links on the web.

The crawler maps out the structure of a website and identifies pages of interest, which can then be passed on to a scraper for detailed extraction. If you're curious about how to find all links on a website or build your own crawler, we have resources and tutorials available to guide you through the process.

Can you manually edit or remove rules for AutoScraper objects?

Forget about complex web scraping processes

Choose Oxylabs' advanced web intelligence collection solutions to gather real-time public data hassle-free.

About the author

Dovydas Vėsa

Technical Content Researcher

Dovydas Vėsa is a Technical Content Researcher at Oxylabs. He creates in-depth technical content and tutorials for web scraping and data collection solutions, drawing from a background in journalism, cybersecurity, and a lifelong passion for tech, gaming, and all kinds of creative projects.

All information on Oxylabs Blog is provided on an "as is" basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Oxylabs Blog or any third-party websites that may be linked therein. Before engaging in scraping activities of any kind you should consult your legal advisors and carefully read the particular website's terms of service or receive a scraping license.

Related articles

Pagination In Web Scraping: How Challenging It May Be

Akvilė Lūžaitė

2024-09-11

What is Browser Automation? Definition and Examples

Vytenis Kaubrė

2022-12-15

Get the latest news from data gathering world

Scale up your business with Oxylabs®

Proxies

Advanced proxy solutions

Data Collection

Datasets

Resources

Innovation hub

Forget about complex web scraping processes

Choose Oxylabs' advanced web intelligence collection solutions to gather real-time public data hassle-free.

![Automated Web Scraper With Python AutoScraper [Guide]](https://oxylabs.io/oxylabs-web/ZpBhlx5LeNNTxEcW_eba26704-d9e7-4562-aaf4-0375a6bb9693_Python-Web-Scraping-1200x600.png?auto=format%2Ccompress&rect=0%2C0%2C1200%2C600&w=1200&h=600)