Building Price Scraping Tools: Picking the Best Proxies

Gabija Fatėnaitė

Last updated on

2021-05-13


Web scraping is becoming an increasingly familiar term. It is a continually evolving data extraction method for gathering vast amounts of publicly available intelligence in an automated way, for example, with a web scraper.

This article takes a closer look at how to use proxies for pricing intelligence and related use cases, such as MAP (minimum advertised price) monitoring. First, we will define the term price scraper. Second, we will discuss why price monitoring is essential for businesses with an online presence. We will then weigh whether a company should build a price scraper in-house or buy an existing web scraping service, such as an e-commerce scraper. Lastly, we will touch on the essential ingredients of a price scraper and share what a business should be aware of when considering proxies for price intelligence.

Strap in, as it is going to be an informative ride!

What is price scraping?

Price scraping is the process of gathering publicly available product price information from e-commerce websites using web scrapers (price scrapers). Because online retailers update and change prices constantly, scraping price intelligence requires reliable solutions that gather accurate, real-time data.

What is a price scraper?

A price scraper is a bot or piece of software that extracts prices, along with other relevant information, from e-commerce sites.
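To make the definition concrete, here is a minimal sketch of the extraction step, using only the Python standard library. The sample markup, the `price` class name, and the function names are assumptions made for illustration; real e-commerce pages differ from site to site and usually need site-specific parsing.

```python
from decimal import Decimal
from html.parser import HTMLParser
import re

# Hypothetical product markup; the "price" class name is an assumption.
SAMPLE_HTML = """
<div class="product">
  <h2 class="title">Wireless Mouse</h2>
  <span class="price">$1,249.99</span>
</div>
"""


class PriceParser(HTMLParser):
    """Collects the text of elements whose class attribute includes 'price'."""

    def __init__(self):
        super().__init__()
        self._in_price = False
        self.raw_prices = []

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if "price" in classes:
            self._in_price = True

    def handle_endtag(self, tag):
        # Simplification: any closing tag ends the price element,
        # so nested markup inside a price element is not handled.
        self._in_price = False

    def handle_data(self, data):
        if self._in_price and data.strip():
            self.raw_prices.append(data.strip())


def parse_price(text):
    """Convert a string such as '$1,249.99' to a Decimal, or None if no number is found."""
    match = re.search(r"\d[\d,]*(?:\.\d+)?", text)
    if match is None:
        return None
    return Decimal(match.group().replace(",", ""))


def extract_prices(html):
    """Return the numeric value of every price-like element in the HTML."""
    parser = PriceParser()
    parser.feed(html)
    return [parse_price(raw) for raw in parser.raw_prices]


if __name__ == "__main__":
    print(extract_prices(SAMPLE_HTML))
```

Prices are kept as `Decimal` values rather than floats so that later comparisons between stores are not affected by rounding.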

Price scrapers might seem quite simple at first glance. However, let’s dig a little bit deeper to understand why price scrapers are necessary for businesses with an online presence.

The undeniable reality of the e-commerce game: how to extract competitors’ prices

The paradigm of e-commerce business strategy has genuinely evolved. Nowadays, real-time data dictates the policies of successful e-commerce businesses, both now and for the foreseeable future. Some of the most sought-after real-time information relates to pricing, as it is a crucial input for shaping strategy and winning customers. Knowing how to scrape prices from websites is quickly becoming critical to success in e-commerce. This is where price scraper tools come in.

According to research carried out by BigCommerce, the top three factors that influence where Americans shop are price (87%), shipping cost and speed (80%), and discount offers (71%). What’s more, over 86% of consumers compare prices on different platforms online before purchasing, and roughly 78% of shoppers will pick a cheaper price tag over an expensive one.

It is rather apparent what consumers’ needs and wants are. But how should businesses address them?

Well, to be price competitive and adjust their pricing strategies accordingly, businesses need to quickly gather intelligence on what their rivals are doing. That includes pricing strategy, product catalogs, promos, discounts, and special offers, to name a few. Companies may also need to conduct e-commerce MAP monitoring to ensure sellers price their products correctly.
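As an illustration of what a MAP (minimum advertised price) check could look like once prices have been collected, the sketch below flags listings advertised below an agreed minimum. The record layout (`seller`, `sku`, `price`), the function name, and the sample figures are all hypothetical.

```python
from decimal import Decimal


def find_map_violations(listings, map_prices):
    """Return listings whose advertised price is below the MAP for their SKU.

    listings: list of dicts with 'seller', 'sku' and 'price' (Decimal) keys.
    map_prices: dict mapping SKU to its minimum advertised price (Decimal).
    SKUs without a known MAP are skipped.
    """
    violations = []
    for listing in listings:
        minimum = map_prices.get(listing["sku"])
        if minimum is not None and listing["price"] < minimum:
            violations.append(
                {**listing, "map": minimum, "shortfall": minimum - listing["price"]}
            )
    return violations


if __name__ == "__main__":
    # Made-up sample data for demonstration only.
    scraped = [
        {"seller": "shop-a", "sku": "MOUSE-01", "price": Decimal("24.99")},
        {"seller": "shop-b", "sku": "MOUSE-01", "price": Decimal("19.49")},
        {"seller": "shop-c", "sku": "KEYB-07", "price": Decimal("59.00")},
    ]
    agreed_minimums = {"MOUSE-01": Decimal("22.00")}
    for violation in find_map_violations(scraped, agreed_minimums):
        print(violation["seller"], violation["sku"], violation["shortfall"])
```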

Luckily, the knowledge is out there on the internet for the taking. All that a business needs is a price scraper. So, why not find the hidden value behind it, and drive growth to your e-shop?

Price scraper tools – build or buy?

This is a key question, yet many businesses that are after price intelligence data do not give it enough attention. Whether a company is only starting to look into web scraping or has already chosen one path over the other, considering and reconsidering this question is a must.

Firstly, let’s cover a scenario where a business decides to build its in-house price scraper to retrieve publicly available data.

Building a price scraper

Just like with anything in life, the more you practice and the more know-how you gather, the easier it becomes to succeed. At first glance, price scrapers might seem like straightforward and simple tools. Of course, they can be, given the necessary knowledge and resources.

However, businesses have to keep in mind that every investment needs to be maintained. The reality is that a web scraping mechanism will have to be updated, reworked, and adjusted to keep performing as expected. Hence, the initial setup costs could differ from what was anticipated. Additionally, expenses might differ wildly depending on the intended targets.

Online stores that have been around for a while understand the value of data extremely well, so they often create roadblocks for price scrapers. These roadblocks include bot detection algorithms, IP blocks, and other tools that can prevent price scraping. Additionally, stores will eventually update their bot detection algorithms, which adds to the development costs of any price scraper tool.

On the subject of maintenance, a business will need dedicated and experienced professionals to oversee the whole operation. It is another cost that should always be included in the bottom line of this option.

What’s more, creating a process to extract prices from websites might mean that a business needs to monitor thousands of pricing intelligence data points. Some of them will be more challenging to target, while others might leave the front door wide open. Either way, to collect all the necessary data, bring it under one roof in structured datasets, and do it effectively, a business might need senior developers to run price scrapers successfully.

Of course, that is only the cost side. What about the time-consuming tasks around the price scraper, and the lack of real-time data when a business needs to react promptly to dynamic pricing changes? Admittedly, this could result in lost revenue.

On the other hand, building an in-house data gathering solution means that a business can set it up exactly as desired. It might take time, and the upfront costs could be daunting at first. But as mentioned earlier, with growing know-how, human capital, and, most importantly, essential resources, it is more than achievable and could be a much more viable option in the long term.

Hopefully, “essential resources” caught your attention, as in this case it refers to proxies. The words of our Enterprise Account Manager, Vincent Patrizio, paint the picture quite accurately:

“If the data acquisition team decides to build their mechanisms to retrieve publicly available data, proxies become the keystone to this process.”

But then it comes down to choosing the right ones. The proxy market usually offers two main types of proxies: datacenter and residential. Of course, there are also location-based proxies, such as USA proxies, which are used whenever location-sensitive data needs to be accessed.
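For readers building in-house, the following is a rough sketch of how requests could be spread across a pool of proxies using only Python's standard library. The proxy addresses are placeholders on `example.com`, and the class and function names are assumptions for illustration, not any provider's API. Real proxy services typically also require credentials and may offer their own rotation.

```python
import itertools
import urllib.request

# Placeholder endpoints; replace with addresses supplied by a proxy provider.
PROXIES = [
    "http://proxy1.example.com:8080",
    "http://proxy2.example.com:8080",
    "http://proxy3.example.com:8080",
]


class ProxyRotator:
    """Hands out proxies from a fixed pool in round-robin order."""

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._cycle = itertools.cycle(proxies)

    def next_proxy(self):
        return next(self._cycle)


def opener_for(proxy_url):
    """Build a urllib opener that routes HTTP and HTTPS traffic through proxy_url."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


def fetch(url, rotator, timeout=10):
    """Fetch url through the next proxy in the pool and return the raw body."""
    opener = opener_for(rotator.next_proxy())
    with opener.open(url, timeout=timeout) as response:
        return response.read()
```

Round-robin is the simplest rotation policy. A production setup would more likely retire proxies that keep failing and retry the request through a different one.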

Choosing proxy providers

Choosing the right proxy provider is vital. Recently, Proxyway published an in-depth market research paper on global proxy service providers, and the report’s performance evaluation section emphasized that it is essential to check proxies against every data source, especially the widely popular ones, no matter whether the provider offers premium or cheap proxies.

If a business chooses unsuitable or unreliable proxies, the in-house data retrieval mechanism will, more often than not, perform unsatisfactorily. In other words, make sure that you fuel your business’s data engines with the right resources.
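One simple way to check proxies against every data source is to log each request's outcome and compute a success rate per proxy and target pair. The sketch below assumes a hypothetical log of `(proxy, target, status_code)` tuples and treats any 2xx response as a success; it is not the methodology used in the Proxyway report.

```python
from collections import defaultdict


def success_rates(attempts):
    """Compute the share of 2xx responses for each (proxy, target) pair.

    attempts: iterable of (proxy, target, status_code) tuples.
    Returns a dict mapping (proxy, target) to a float between 0 and 1.
    """
    totals = defaultdict(lambda: [0, 0])  # (proxy, target) -> [successes, attempts]
    for proxy, target, status in attempts:
        counts = totals[(proxy, target)]
        counts[1] += 1
        if 200 <= status < 300:
            counts[0] += 1
    return {key: ok / total for key, (ok, total) in totals.items()}


if __name__ == "__main__":
    # Made-up request log for demonstration only.
    log = [
        ("proxy-a", "shop1.example.com", 200),
        ("proxy-a", "shop1.example.com", 200),
        ("proxy-a", "shop2.example.com", 200),
        ("proxy-a", "shop2.example.com", 403),
        ("proxy-b", "shop2.example.com", 429),
    ]
    for (proxy, target), rate in sorted(success_rates(log).items()):
        print(f"{proxy} -> {target}: {rate:.0%}")
```

A proxy that performs well on one store can still be blocked on another, which is why the rates are kept per target rather than averaged per proxy.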

If you want to see how a proxy works in action when scraping financial data, check out our guide on how to scrape NASDAQ.

Buying the correct tools for web scraping

By now, you should have a decent understanding that price scraping requires immediate, real-time data to adjust pricing tactics and strategies accordingly.

If a company that already practices web scraping decides to buy a price scraper tool, there is no need to manage the infrastructure in-house. Consequently, the company can grab the desired data promptly and focus on the insights right away.

However, the first hiccup that might scare away business decision makers is that these tools are not cheap. Proxy service providers continually invest in these tools and have to cover ongoing maintenance costs.

With that being said, the convenience of getting the needed data in a hassle-free manner might justify the price. Might, because businesses should still make sure they choose a product that provides the exact data they are after. In some cases, it might be impossible to adjust these tools to a business’s needs and wants. Hence, companies should look into flexible options and, most importantly, think about the next step: in what format will the data be provided, and how effectively can it be interpreted?

Here at Oxylabs, we have both options covered. In this instance, we would offer E-Commerce Scraper API (part of Web Scraper API).

Our Web Scraper API is an excellent price tracking tool that meets even the most diverse business requirements. The beauty of the API is that it lets you concentrate immediately on pricing data in the required format and take actionable steps to capture consumers’ attention online, instead of spending business resources on scraping prices from websites. One of its significant features is that our Web Scraper API is specifically adapted to extract data from the major markets in the field. For example, visit this guide to scraping Best Buy to see a code sample.

Here’s what our Account Manager Jonas had to add:

“Tracking prices online may be tricky – the complexity of such a process increases when more marketplaces are monitored. As with any other website, you’ll probably need a unique approach when retrieving the data, thus adjusting the proxies to the process. If there’s a need to scale the pages retrieved without further ado, I would bet on the Web Scraper API. Nobody likes it when proxies get blocked during a more intense crawl, right?”

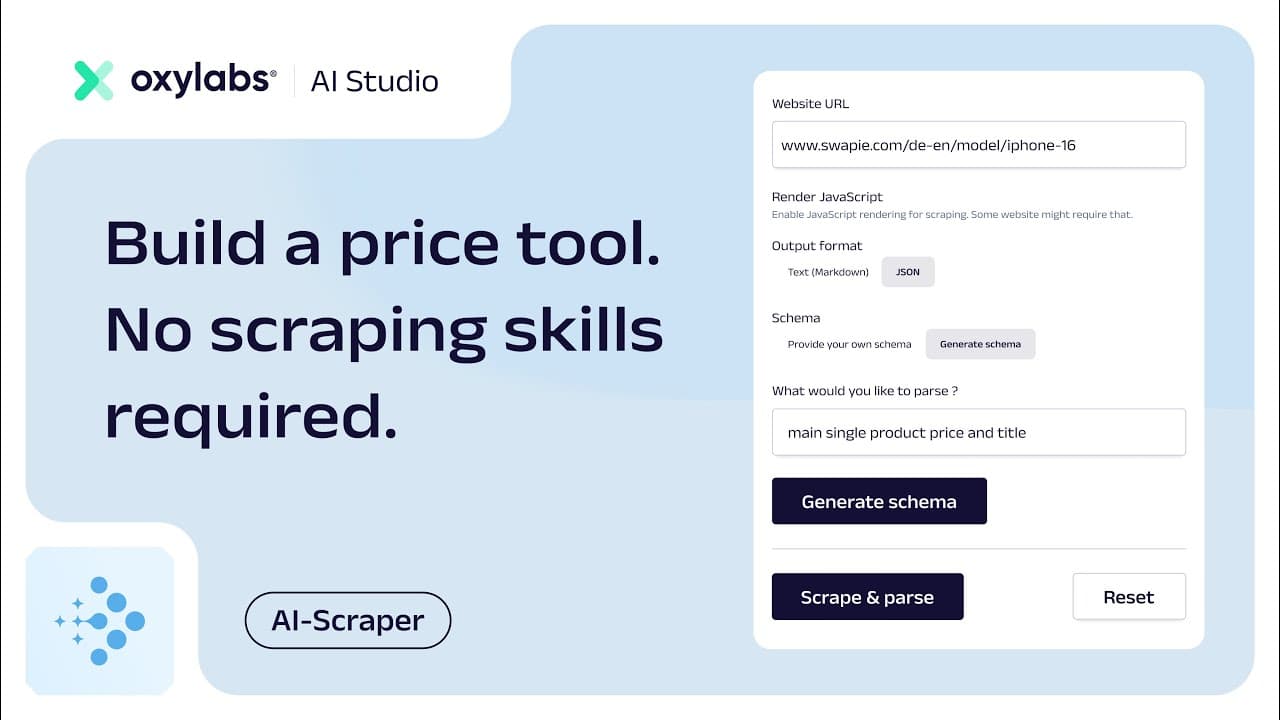

Build a price comparison tool with AI Studio's AI-Scraper

If you're looking for a fast and easy way to build a price comparison tool using natural language, AI Studio's AI-Scraper is your go-to solution. It takes care of both the scraping and parsing processes, so you don’t have to worry about anti-bot systems, writing custom parsing logic for each domain or accessing even hard-to-scrape sites.

To show you how the tool works in practice, we’ve prepared a quick video walkthrough. In it, you’ll learn how to build a simple iPhone 16 price comparison tool by using the app’s user interface and by implementing it with the Python SDK.

Wrapping it all up!

Every experienced e-commerce player will tell you that numbers play a pivotal role in their trade. Needless to say, to stay competitive amid ever-growing consumer price sensitivity, a business has to identify those numbers and use them correctly to claim a piece of market share. Using a price scraper is the easiest way to access this share.

However, it all boils down to having the necessary know-how and a robust solution that will allow a business to price scrape efficiently and cost-effectively, which consequently would enable companies to outsmart their competition. Want to know more about how to build a price scraper? Read our Python web scraping tutorial. Lastly, if you are interested in large-scale web scraping tasks, make sure to try our web scraper for free.

Forget about complex web scraping processes

Choose Oxylabs' advanced web intelligence collection solutions to gather real-time public data hassle-free.

About the author

Gabija Fatėnaitė

Former Director of Product & Event Marketing

Gabija Fatėnaitė was a Director of Product & Event Marketing at Oxylabs. Having grown up on video games and the internet, she grew to find the tech side of things more and more interesting over the years. So if you ever find yourself wanting to learn more about proxies (or video games), feel free to contact her - she’ll be more than happy to answer you.

All information on Oxylabs Blog is provided on an "as is" basis and for informational purposes only. We make no representation and disclaim all liability with respect to your use of any information contained on Oxylabs Blog or any third-party websites that may be linked therein. Before engaging in scraping activities of any kind you should consult your legal advisors and carefully read the particular website's terms of service or receive a scraping license.
